By Yan Cui

Threats to the security of our serverless applications take many forms. Some are old foes we have faced before. Some are new. And some have taken on new forms in the serverless world.

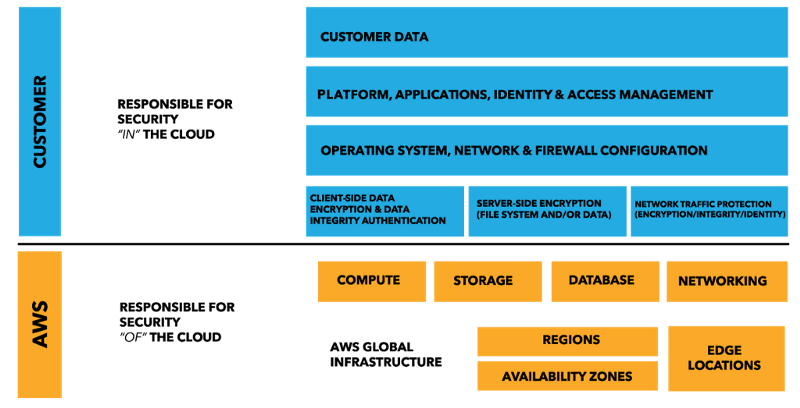

As we adopt the serverless paradigm, we delegate even more operational responsibilities to our cloud providers. With AWS Lambda, you no longer have to configure AMIs, patch the OS, or install monitoring daemons. AWS takes care of all that for you.

What does this mean for the Shared Responsibility Model that has long been the cornerstone of security in the AWS cloud?

Protection from attacks against the OS

AWS takes over the responsibility for maintaining the host OS as part of their core competency. This relieves you of the rigorous task of applying all the latest security patches. This is something most of us don’t do a good enough job of, as it’s not our primary focus.

In doing so, it protects us from attacks against known vulnerabilities in the OS and prevents attacks such as WannaCry.

By removing long-lived servers from the picture, we are also removing the threats posed by compromised servers that live in our environment for a long time.

WannaCry happened because the MS17-010 security patch was not applied to the affected hosts.

However, it is still our responsibility to patch our application and address vulnerabilities that exist in our code and our dependencies.

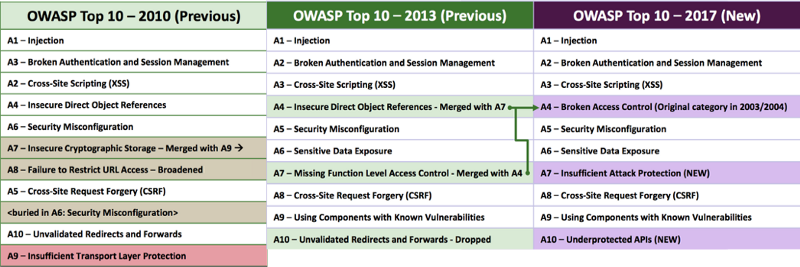

OWASP top 10 is still as relevant as ever

Aside from a few reclassifications, the OWASP top 10 list has largely stayed the same in 7 years.

A glance at the OWASP top 10 for 2017 shows us familiar threats. Injection attacks, Broken Authentication, and Cross-Site Scripting (XSS) still occupy the top spots seven years on.

A9 — Components with Known Vulnerabilities

When the folks at Snyk looked at a dataset of 1792 data breaches in 2016, they found that 12 of the top 50 data breaches were caused by applications using components with known vulnerabilities.

Furthermore, 77% of the top 5000 URLs from Alexa include at least one vulnerable library. This is less surprising than it first sounds when you consider that some of the most popular front-end JS frameworks (e.g. jQuery, Angular and React) all had known vulnerabilities. It highlights the need to continuously update and patch your dependencies.

Unlike OS patches, which are standalone, trusted and easy to apply, security updates to 3rd party dependencies are often bundled with feature and API changes that need to be integrated and tested. It makes our life as developers difficult. It’s yet another thing we have to do when we’re working overtime to ship new features.

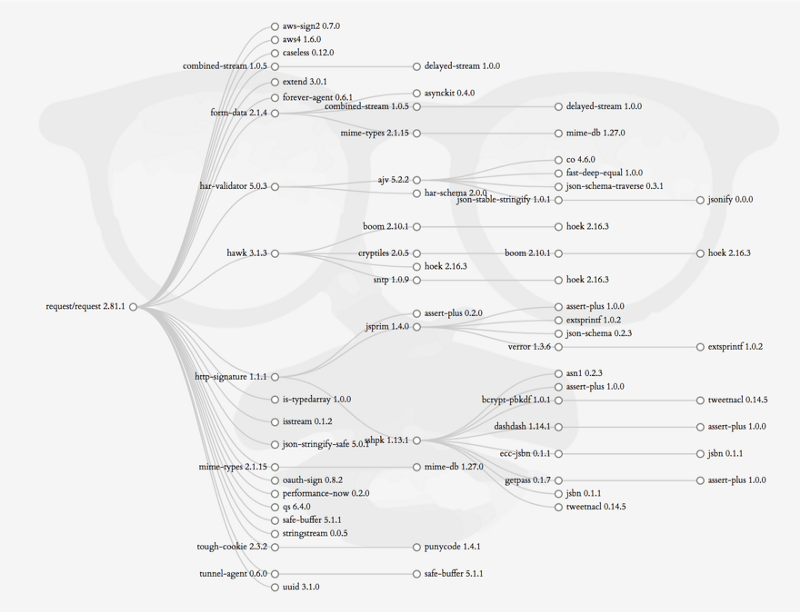

And then there’s the matter of transitive dependencies. If these transitive dependencies have vulnerabilities, then you too are vulnerable through your direct dependencies.

[https://david-dm.org/request/request?view=tree](https://david-dm.org/request/request?view=tree)

Finding vulnerabilities in our dependencies is hard work and requires constant diligence, which is why services such as Snyk are so useful. Snyk even comes with a built-in integration with Lambda, too!

Attacks against NPM publishers

What if the author/publisher of your 3rd party dependency is not who you think they are?

Last year, a security bounty hunter managed to gain direct push rights to 14% of NPM packages. The list of affected packages includes some big names too: debug, request, react, co, express, moment, gulp, mongoose, mysql, bower, browserify, electron, jasmine, cheerio, modernizr, redux and many more. In total, these packages account for 20% of the total number of monthly downloads from NPM.

Let that sink in for a moment.

Did he use sophisticated methods to circumvent NPM’s security?

Nope, it was a combination of brute force and using known account and credential leaks from a number of sources including GitHub. In other words, anyone could have pulled this off with very little research.

It’s hard not to feel let down by these package authors when so many display such a cavalier attitude towards securing access to their NPM accounts.

662 users had password «123456», 174 had «123», 124 had «password». 1409 users (1%) used their username as their password, in its original form, without any modifications.

11% of users reused their leaked passwords: 10.6% — directly, and 0.7% — with minor modifications.

As I demonstrated in my talk on Serverless security, you can steal temporary AWS credentials by adding a few lines of code.
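To see why so little code is needed, consider where the credentials live. The sketch below is not the code from the talk. It only illustrates that the Lambda runtime exposes the execution role's temporary credentials as environment variables, so any module loaded into the process, including a third-party dependency, can read them. The helper name `exposed_credential_vars` is made up for this example, and it reports only which variables are present, never their values.

```python
import os

# Environment variables the Lambda runtime populates with the execution
# role's temporary credentials.
CREDENTIAL_VARS = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY", "AWS_SESSION_TOKEN")


def exposed_credential_vars(env=None):
    """Return the names (not the values) of credential variables visible here."""
    env = os.environ if env is None else env
    return [name for name in CREDENTIAL_VARS if env.get(name)]
```

Calling `exposed_credential_vars()` from inside any imported package gives the same answer as calling it from your handler, which is exactly the problem.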

Imagine, then, a scenario where an attacker had managed to gain push rights to 14% of all NPM packages. He could publish a patch update to all these packages and steal AWS credentials at a massive scale.

The stakes are high and it’s possibly the biggest security threat we face in the serverless world. And, it also impacts applications running inside EC2 or containers.

The problems and risks with package management are not specific to the Node.js ecosystem. I have spent most of my career working with .NET and now Scala, and package management has been a challenge everywhere. We need package authors to exercise due diligence towards the security of their accounts.

A1 — Injection & A3 — XSS

SQL injection and other forms of injection attacks are still possible in the serverless world. As are Cross-Site Scripting (XSS) attacks.

Even if you’re using NoSQL databases you might not be safe from injection attacks either. MongoDB, for instance, exposes a number of attack vectors through its query APIs.
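One such vector is operator injection. If a JSON request body is passed straight into a query filter, a client can send an object such as `{"$ne": null}` where a string was expected, and the filter then matches far more than intended. Here is a minimal Python sketch of a guard against that, assuming a hypothetical password-reset endpoint; the function and field names are invented for illustration.

```python
import json


def build_reset_filter(body: str) -> dict:
    """Build an equality-only query filter from a JSON request body.

    Every expected field must be a plain string, so operator objects such
    as {"$ne": None} are rejected before they reach the database driver.
    """
    payload = json.loads(body)
    if not isinstance(payload, dict):
        raise ValueError("request body must be a JSON object")
    query = {}
    for field in ("email", "reset_token"):  # hypothetical field names
        value = payload.get(field)
        if not isinstance(value, str):
            raise ValueError(f"{field} must be a string")
        query[field] = value
    return query
```

Building the filter from a fixed list of fields, rather than copying whatever the client sent, also keeps unexpected keys out of the query.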

DynamoDB’s more rigid API makes an injection attack harder. But you’re still open to other forms of exploits, for example XSS and leaked credentials that grant an attacker access to your DynamoDB tables.

Nonetheless, you should always sanitize user inputs, as well as the output from your Lambda functions.
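As a rough sketch of what that can look like in a Python function behind API Gateway: validate the input's type and size, then escape it before it goes into any HTML you return. The event shape assumes the Lambda proxy integration, and the `comment` field and 500-character limit are assumptions made for this example.

```python
import html
import json


def render_comment(event: dict) -> dict:
    """Return a proxy-style response with the user's text HTML-escaped."""
    body = json.loads(event.get("body") or "{}")
    comment = body.get("comment", "") if isinstance(body, dict) else None
    # Input check: must be a string within the assumed length limit.
    if not isinstance(comment, str) or len(comment) > 500:
        return {"statusCode": 400, "body": "invalid comment"}
    # Output encoding: escapes &, <, > and both quote characters.
    safe = html.escape(comment)
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "text/html; charset=utf-8"},
        "body": f"<p>{safe}</p>",
    }
```

Escaping at output time matters even when the input was validated, because the same data may later be rendered in a different context.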

A6 — Sensitive Data Exposure

Along with servers, web frameworks are also redundant when you move to the serverless paradigm. These web frameworks have served us well for many years. But they also handed us a loaded gun we can shoot ourselves in the foot with.

Troy Hunt demonstrated how we can accidentally expose all kinds of sensitive data by leaving directory listing options ON. From web.config containing credentials (at 35:28) to SQL backup files (at 1:17:28)!

With API Gateway and Lambda, accidental exposures like this are very unlikely, because directory listing becomes a “feature” you’d have to implement yourself. It forces you to make a conscious decision about when to support directory listing, and the answer is likely never.

IAM

If your functions are compromised, the next line of defense is to restrict what the compromised functions can do.

This is why you need to apply the Least Privilege Principle when configuring Lambda permissions.
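One cheap way to keep yourself honest is to lint policy documents for wildcards before they are deployed. The following is a minimal sketch that works on a policy already loaded as a Python dict; the function name and the choice of what counts as a finding are assumptions, and it does not replace a proper policy review.

```python
def find_wildcards(policy: dict) -> list:
    """Return (statement index, problem) pairs for Allow statements with wildcards."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a lone statement may be a bare object
        statements = [statements]
    findings = []
    for i, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        actions = stmt.get("Action", [])
        resources = stmt.get("Resource", [])
        # Action and Resource may each be a single string or a list.
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources
        for action in actions:
            if action == "*" or action.endswith(":*"):
                findings.append((i, f"wildcard action {action}"))
        for resource in resources:
            if resource == "*":
                findings.append((i, "wildcard resource *"))
    return findings
```

A statement that allows `dynamodb:*` on `*` produces two findings, while one that allows only `dynamodb:GetItem` on a specific table ARN produces none.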

In the Serverless framework, the default behaviour is to use the same IAM role for all functions in the service.

However, the serverless.yml spec allows you to specify a different IAM role per function. But it involves a lot more development effort and adds enough friction that almost no one does this.

Thankfully, Guy Lichtman created a plugin for the Serverless framework called serverless-iam-role-per-function. This plugin makes applying per function IAM roles much easier. Follow the instructions on the GitHub page and give it a try yourself.

You should apply per-function IAM policies.

IAM policy not versioned with Lambda

A shortcoming with Lambda and IAM configuration is that IAM policies are not versioned with the Lambda function.

If you have multiple versions of the same function in active use (perhaps with different aliases), then it becomes problematic to add or remove permissions:

- Adding permissions to a new version allows older versions more access than they need

- Removing permissions from a new version can break older versions that still need those permissions

Before 1.0, this was a common problem with the Serverless framework because it used aliases to implement stages. Since 1.0, this is no longer a problem, because each stage is deployed as a separate function. For example:

- service-function-dev

- service-function-staging

- service-function-prod

This means only one version of each function is active at any moment in time, except when you use aliases during a canary deployment.

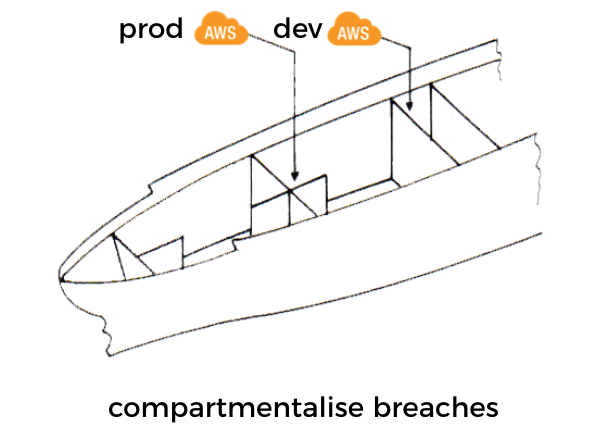

Account level isolation can also help mitigate the problems of adding/removing permissions. This isolation also helps compartmentalize security breaches. For example, a compromised function in a non-production account cannot be used to gain access to production data.

We can apply the same idea of bulkheads (which has been popularised in the microservices world by Michael Nygard’s “Release It”) and compartmentalize security breaches at an account level.

Delete unused functions

One of the benefits of the serverless paradigm is that you don’t pay for functions when they’re not used.

The flip side is that you have less incentive to remove unused functions since they don’t cost you anything. However, these functions still exist as attack surfaces. They are also more dangerous than active functions because they’re less likely to be updated and patched. Over time, these unused functions can become a hotbed for known vulnerabilities that attackers can exploit.

Lambda’s documentation also cites this as one of the best practices.

Delete old Lambda functions that you are no longer using.
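Finding candidates for deletion can be partly automated. The sketch below flags functions that have not been modified for a while. It assumes a list of dicts shaped like the entries returned by Lambda's ListFunctions API (only `FunctionName` and `LastModified` are used), and the 180-day threshold is arbitrary. Note that last modified is only a proxy for unused: a function can be old and still busy, so check its invocation metrics before deleting anything.

```python
from datetime import datetime, timedelta, timezone


def stale_functions(functions, max_age_days=180, now=None):
    """Return names of functions whose code or config has not changed recently."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    stale = []
    for fn in functions:
        # LastModified looks like "2017-06-01T10:15:30.000+0000".
        modified = datetime.strptime(fn["LastModified"], "%Y-%m-%dT%H:%M:%S.%f%z")
        if modified < cutoff:
            stale.append(fn["FunctionName"])
    return stale
```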

The changing face of DoS attacks

With AWS Lambda, you are far more likely to scale your way out of a Denial-of-Service (DoS) attack. However, scaling your serverless architecture aggressively to fight a DoS attack with brute force has a significant cost implication.

No wonder people started calling DoS attacks against serverless applications Denial of Wallet (DoW) attacks!

“But you can just throttle the no. of concurrent invocations, right?”

Sure, and you end up with a DoS problem instead… it’s a lose-lose situation.
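To put a rough number on the wallet side of that trade-off, here is a back-of-the-envelope estimator. The default prices are assumptions based on published per-request and per-GB-second Lambda rates; check the current price list for your region. The estimate ignores the free tier and everything else on the request path, such as API Gateway and data transfer.

```python
def lambda_cost_usd(invocations, avg_duration_ms, memory_mb,
                    price_per_million_requests=0.20,
                    price_per_gb_second=0.00001667):
    """Estimate Lambda charges for a number of invocations (Lambda only)."""
    request_cost = invocations / 1_000_000 * price_per_million_requests
    # Compute is billed on duration multiplied by configured memory.
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    return request_cost + gb_seconds * price_per_gb_second
```

For example, an attacker sustaining 1,000 requests per second for 24 hours generates 86.4 million invocations. At 200 ms each on a 1024 MB function, `lambda_cost_usd(86_400_000, 200, 1024)` comes to roughly $305 for that one function on that one day, before any downstream costs.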

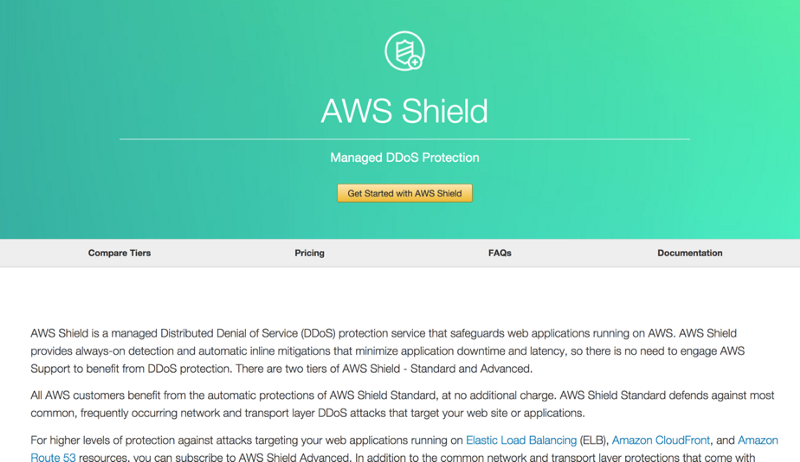

Of course, there is AWS Shield. For a flat fee, AWS Shield Advanced gives you payment protection in the event of a DoS attack. But at the time of writing, this protection does not cover Lambda costs.

For a monthly flat fee, AWS Shield Advanced gives you cost protection in the event of a DoS attack, but that protection does not cover Lambda yet.

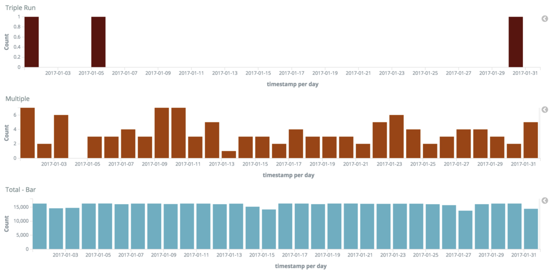

Also, Lambda has an at-least-once invocation policy. According to the folks at SunGard, this can result in up to three (successful) invocations. From the article, the reported rate of multiple invocations is extremely low, at 0.02%. But one wonders if the rate is tied to the load and might manifest itself at a much higher rate during a DoS attack.

Taken from the “Run, Lambda, Run” article mentioned above.

Furthermore, you need to consider how Lambda retries failed invocations from asynchronous sources such as S3, SNS, SES, and CloudWatch Events.

Officially, these invocations are retried twice before they’re sent to the assigned Dead Letter Queue (DLQ) if one is configured. However, an analysis by OpsGenie showed that the number of retries can go up to as many as 6 before the invocation is sent to the DLQ.

If the DoS attacker is able to trigger failed async invocations then they can magnify the impact of their attack.

For example, if your application allows the client to upload a file to S3 for processing, then the attacker can DoS you by uploading large numbers of invalid files that will cause your functions to error and retry.

All these add up to the potential for the actual number of Lambda invocations to explode during a DoS attack. As we discussed earlier, while your infrastructure might be able to handle the attack, can your wallet stretch to the same extent? Should you allow it to?
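The amplification from retries is simple arithmetic, sketched below. The model is deliberately crude: it assumes a failing event fails on every attempt (as an invalid file would), and the function name and parameters are invented for illustration.

```python
def total_invocations(events, failure_rate, retries=2):
    """Expected invocations when each failing async event is retried `retries` times."""
    failing = events * failure_rate
    succeeding = events - failing
    # Each failing event costs the first attempt plus every retry.
    return succeeding + failing * (1 + retries)
```

With the documented two retries, one million invalid uploads become three million invocations. If the retry count reached the six observed in the OpsGenie analysis, `total_invocations(1_000_000, 1.0, retries=6)` gives seven million.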

Securing external data

Just a handful of the places you could be storing state outside of your stateless Lambda function.

Due to the ephemeral nature of Lambda functions, chances are all your functions are stateless. More than ever, state is stored in external systems and we need to secure it both at rest and in transit.

Communication to all AWS services happens via HTTPS and every request is signed and authenticated. A handful of AWS services also offer server-side encryption for your data at rest. For example, S3, RDS and Kinesis streams spring to mind. Lambda also has built-in integration with KMS to encrypt environment variables.

Recently DynamoDB has also announced support for encryption at-rest.

The same diligence needs to be applied when storing sensitive data in services/databases that do not offer built-in encryption. In the case of a data breach, it provides another layer of protection for your users’ data.

We owe our users that much.

Use secure transport when transmitting data to and from services (both external and internal ones). If you’re building APIs with API Gateway and Lambda then you’re forced to use HTTPS by default, which is a good thing. However, API Gateway endpoints are always public, so you need to take the necessary precautions to secure access to internal APIs.

You should use IAM roles to protect internal APIs. It gives you fine-grained control over who can invoke which actions on which resources. Using IAM roles also spares you from awkward conversations like this:

“It’s X’s last day, he probably has our API keys on his laptop somewhere, should we rotate the API keys just in case?”

“Hmm.. that’d be a lot of work, X is trustworthy, he’s not gonna do anything.”

“Ok… if you say so… (secretly prays that X doesn’t lose his laptop or develop a belated grudge against the company)”

Fortunately, this can be easily configured using the Serverless framework.

Leaked credentials

Don’t become an unwilling bitcoin miner.

The internet is full of horror stories of developers racking up a massive AWS bill after their leaked credentials are used to mine bitcoins. For every such story, many more have been affected but chose to stay silent. For the same reason, many security breaches are not disclosed publicly as companies do not want to lose face.

Even within my small social circle, I know of two such incidents. Neither was made public and both resulted in over $100k worth of damages. Fortunately, in both cases AWS agreed to cover the cost.

AWS scans public GitHub repos for active AWS credentials and tries to alert you as soon as possible. But even if your credentials were public for only a brief moment, they might not escape the watchful gaze of attackers. Plus, they still exist in Git commit history unless you rewrite the history, too. If your credentials came into the public domain then it’s best to deactivate them as soon as possible.

A good approach to prevent AWS credential leaks is to use Git pre-commit hooks as outlined by this post.
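The core of such a hook is a pattern match over what is about to be committed. The sketch below is not the approach from the linked post; it is a minimal illustration that looks for strings shaped like AWS access key IDs (the `AKIA` or `ASIA` prefix followed by 16 uppercase letters or digits) in the lines a diff adds. The function names are made up, and it will not catch secret access keys or other kinds of secrets.

```python
import re

# Access key IDs: AKIA (long-term) or ASIA (temporary) plus 16 characters.
ACCESS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b")


def added_lines(diff: str) -> list:
    """Return the lines a unified diff adds, without the leading '+'."""
    return [line[1:] for line in diff.splitlines()
            if line.startswith("+") and not line.startswith("+++")]


def leaked_key_ids(diff: str) -> list:
    """Return strings in the added lines that look like AWS access key IDs."""
    hits = []
    for line in added_lines(diff):
        hits.extend(ACCESS_KEY_ID.findall(line))
    return hits
```

A script in `.git/hooks/pre-commit` could feed the output of `git diff --cached` to `leaked_key_ids` and exit with a non-zero status when the list is not empty, which makes Git abort the commit.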

From what I hear, attackers are most likely to launch EC2 instances in the Sao Paulo and Tokyo regions. You can use CloudWatch event patterns and Lambda to alert you when there are EC2 API calls in regions you’re not using. That way, you can at least react more quickly when your credentials are leaked.
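A minimal sketch of the Lambda side of that alert follows. It assumes the function is triggered by a CloudWatch Events rule that matches CloudTrail API call events, and that the event's `detail` carries `eventSource`, `eventName` and `awsRegion`. The allowed regions and the use of `print` as the alert channel are placeholders. Since event rules are regional, you would likely need a rule in each region you want to watch.

```python
ALLOWED_REGIONS = {"us-east-1", "eu-west-1"}  # assumption: regions you actually use


def unexpected_ec2_call(event: dict):
    """Return an alert message for an EC2 API call outside ALLOWED_REGIONS, else None."""
    detail = event.get("detail", {})
    if detail.get("eventSource") != "ec2.amazonaws.com":
        return None
    region = detail.get("awsRegion")
    if region in ALLOWED_REGIONS:
        return None
    return f"EC2 API call {detail.get('eventName')} in unexpected region {region}"


def handler(event, context):
    """Lambda entry point: log the alert if there is one."""
    message = unexpected_ec2_call(event)
    if message:
        print(message)  # placeholder: swap in the notification channel you use
    return {"alert": message}
```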

Conclusions

We looked at a number of security threats to our serverless applications in this post. Many of them are the same threats that have plagued the software industry for years. All the OWASP top 10 still apply to us, including SQL, NoSQL, and other forms of injection attacks.

Leaked AWS credentials remain a major issue and can impact any organisation that uses AWS. Whilst there are quite a few publicly reported incidents, I have a strong feeling that the actual number of incidents is much, much higher.

We are still responsible for securing our users’ data both at rest and in transit. API Gateway is always publicly accessible, so we need to take the necessary precautions to secure access to our internal APIs, preferably with IAM roles. IAM offers fine-grained control over who can invoke which actions on your API resources, and makes it easy to manage access when employees come and go.

On a positive note, having AWS take over the responsibility for the security of the host OS gives us a number of security benefits:

- Protection against OS attacks, because AWS can do a much better job of patching known vulnerabilities in the OS

- Host OSs are ephemeral which means no long-lived compromised servers

With API Gateway and Lambda, you don’t need web frameworks to create an API anymore. Without web frameworks, there is no easy way to support directory listing. But that’s a good thing, because it makes directory listing a conscious design decision. No more accidental exposure of sensitive data through misconfiguration.

DoS attacks have taken a new form in the serverless world. While you’re able to scale your way out of an attack, it’ll hurt you in the wallet instead. Lambda costs incurred during a DoS attack are not covered by AWS Shield Advanced at the time of writing.

Meanwhile, some new attack surfaces have emerged with AWS Lambda:

- Functions are often over-permissioned. A compromised function can do more harm than it might otherwise.

- Unused functions are often left around for a long time, because there is no cost penalty. But attackers can exploit them. They’re also more likely to contain known vulnerabilities since they’re not actively maintained.

Above all, the most worrisome threat for me is attacks against the package authors themselves. Many authors do not take the security of their accounts seriously. This endangers themselves as well as the rest of the community that depends on them. It’s difficult to guard against such attacks, and they erode one of the strongest aspects of any software ecosystem: the community behind it.

Once again, people have proven to be the weakest link in the security chain.