By Per Harald Borgen

_Click here to get to the course._

Over the last few years, machine learning has gone from a promising technology to something we’re surrounded by on a daily basis. And at the heart of many machine learning systems lie neural networks.

Neural networks are what’s powering self-driving cars, the world’s best chess players, and many of the recommendations you get from apps like YouTube, Netflix, and Spotify.

So today I’m super-stoked to finally present a Scrimba course that helps any web developer easily get started with neural networks.

This is the very first machine learning course on Scrimba, but certainly not the last!

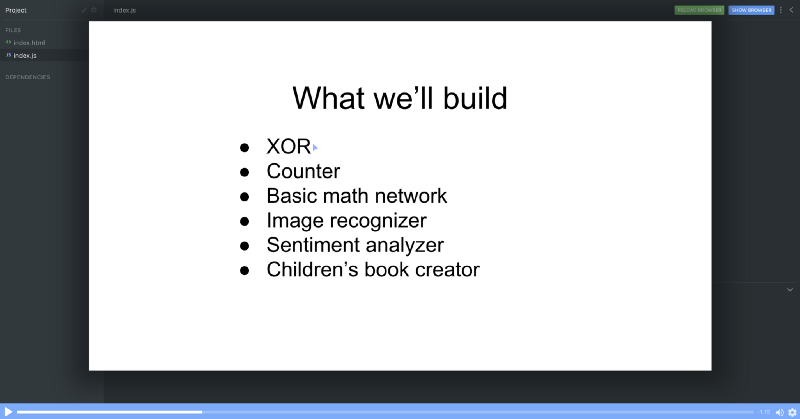

In the course, Robert Plummer teaches you how to use his popular Brain.js library through solving a bunch of exciting problems, such as:

- recognizing images

- analyzing the sentiment of sentences

- and even writing a very simple children’s book!

And thanks to the Scrimba platform, you’ll be able to directly interact with the example code and modify it along the way.

This may be the most interactive course on neural networks ever created.

So let’s have a look at what you’ll learn throughout these 19 free screencasts.

1. Introduction

Robert starts by giving you an overview of the concepts you’ll learn, the projects you’ll build, and the overall pedagogical philosophy behind the course. It’s a practical course that focuses on empowering people to build rather than getting stuck in the theoretical aspects behind neural nets.

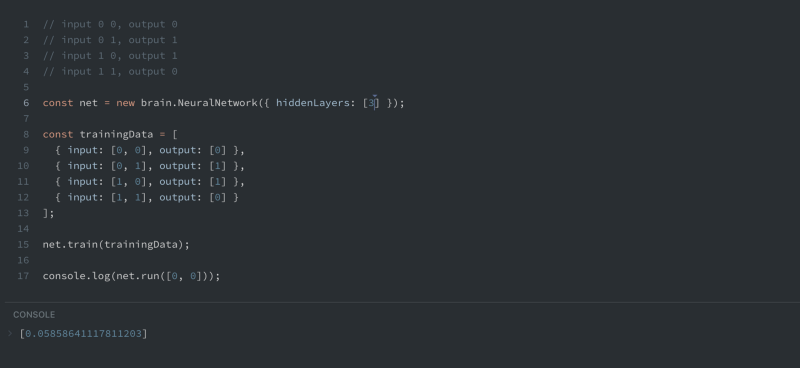

2. Our first neural net!

In this lecture, we’ll jump straight into the code. Robert takes you through building an XOR net, which is the simplest net possible to build. Within two minutes of the lecture, you’ll have watched your first neural network being coded.

You’ll also be encouraged to play around with the net yourself, by simply pausing the screencast, editing the values, and then running the net for yourself!

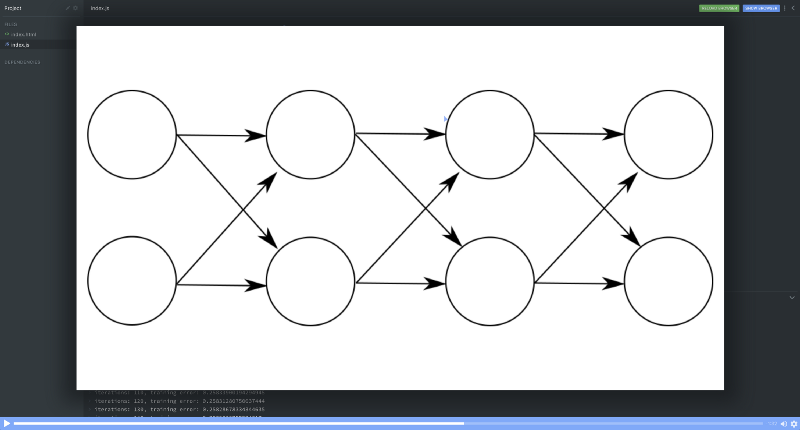

3. How do they learn? Propagation

Robert continues the course with a little bit of theory. In this lecture, he explains the concepts of forward propagation and back propagation, both of which are at the core of neural nets.

He uses a simple example to explain the concepts in a way everyone can understand.

Robert also gives a quick intro to the error function, which is another key component of neural nets, as the error tells the net how far off its predictions are during training.
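To make these three ideas a bit more concrete, here is a minimal sketch in plain JavaScript, with no Brain.js involved. It assumes a toy "network" that consists of a single weight, and the names `forward`, `error` and `trainStep` are made up for this illustration. A real library does the same kind of thing across many weights and layers.

```javascript
// Toy model: a single weight, so prediction = weight * input.
// All names here are illustrative, not part of Brain.js.
function forward(weight, input) {
  return weight * input; // forward propagation: input -> prediction
}

function error(prediction, target) {
  return (prediction - target) ** 2; // squared error: how far off are we?
}

function trainStep(weight, input, target, learningRate) {
  const prediction = forward(weight, input);
  // Back propagation: the derivative of the squared error with respect
  // to the weight tells us in which direction to nudge the weight.
  const gradient = 2 * (prediction - target) * input;
  return weight - learningRate * gradient;
}

// Learn the rule "output = 2 * input" from a single example (3 -> 6).
let weight = 0.5; // arbitrary starting value
for (let i = 0; i < 50; i++) {
  weight = trainStep(weight, 3, 6, 0.01);
}
console.log(weight, error(forward(weight, 3), 6));
```

Each pass through the loop shrinks the error a little, and the weight drifts towards 2.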

4. How do they learn? Part 2 — Structure

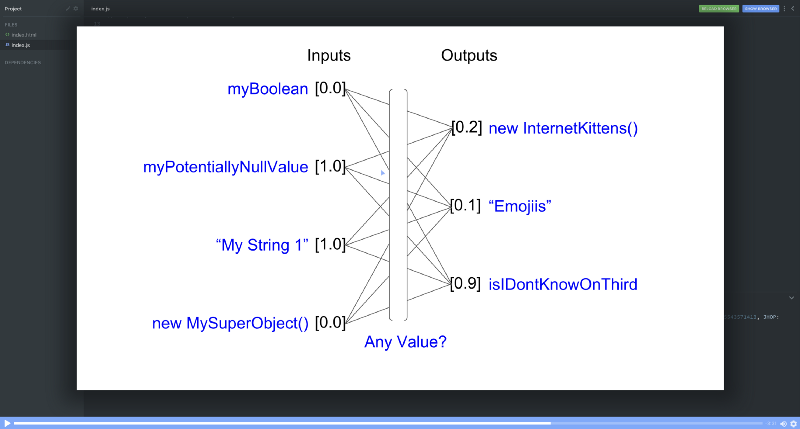

In this lecture, Robert explains a few more concepts. More specifically, he explains the underlying structure of neural nets:

- inputs & outputs

- random values

- activation functions (“relu”)

He also provides a couple of links you can use if you’re interested in diving a bit deeper into these concepts. But with this being a practical course rather than a theoretical one, he quickly moves on.
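If you’d like to see how these pieces fit together, here is a rough sketch in plain JavaScript. It isn’t taken from the course or from Brain.js internals, and the helper names `relu`, `neuron` and `randomWeights` are assumptions made for the example.

```javascript
// Illustrative helpers, not Brain.js internals.
// ReLU ("rectified linear unit") passes positive values through
// and clamps negative values to zero.
function relu(x) {
  return Math.max(0, x);
}

// A neuron multiplies each input by a weight, adds a bias,
// and sends the sum through the activation function.
function neuron(inputs, weights, bias) {
  let sum = bias;
  for (let i = 0; i < inputs.length; i++) {
    sum += inputs[i] * weights[i];
  }
  return relu(sum);
}

// Before training, weights typically start out as small random values.
// Here they are drawn from the range -1 to 1.
function randomWeights(count) {
  return Array.from({ length: count }, () => Math.random() * 2 - 1);
}

const startingWeights = randomWeights(2);
console.log(neuron([1, 0], startingWeights, 0)); // changes on every run
```

Training is then the process of replacing those random starting values with ones that give useful outputs.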

5. How do they learn? Part 3 — Layers

Now it’s about time to get familiar with layers. So in this lecture, Robert gives you an overview of how to configure Brain.js layers and why layers are important.

Robert also highlights how simple the calculations inside the neurons of a feedforward network are. If you’re curious and want to learn more about this, you can follow the links he shares towards the end of this lecture.
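To give a feel for how simple those calculations are, here is a small feedforward net written by hand in plain JavaScript. The weights are hand-picked so that the net solves XOR. A trained net would find its own values, and the `layer` and `xorNet` functions are hypothetical names used only for this sketch.

```javascript
// Hand-picked weights for illustration; a trained net would find its own.
const relu = (x) => Math.max(0, x);

// One layer: every neuron takes a weighted sum of all inputs plus a bias,
// then applies the activation function.
function layer(inputs, weights, biases, activation) {
  return weights.map((neuronWeights, n) => {
    const sum = neuronWeights.reduce(
      (total, w, i) => total + w * inputs[i],
      biases[n]
    );
    return activation(sum);
  });
}

function xorNet(a, b) {
  // Hidden layer: two neurons with ReLU activation.
  const hidden = layer([a, b], [[1, 1], [1, 1]], [0, -1], relu);
  // Output layer: one neuron, no activation.
  const [output] = layer(hidden, [[1, -2]], [0], (x) => x);
  return output;
}

for (const [a, b] of [[0, 0], [0, 1], [1, 0], [1, 1]]) {
  console.log(a, b, "->", xorNet(a, b));
}
```

Without the hidden layer, no choice of weights would get all four XOR cases right, which is one reason layers matter.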

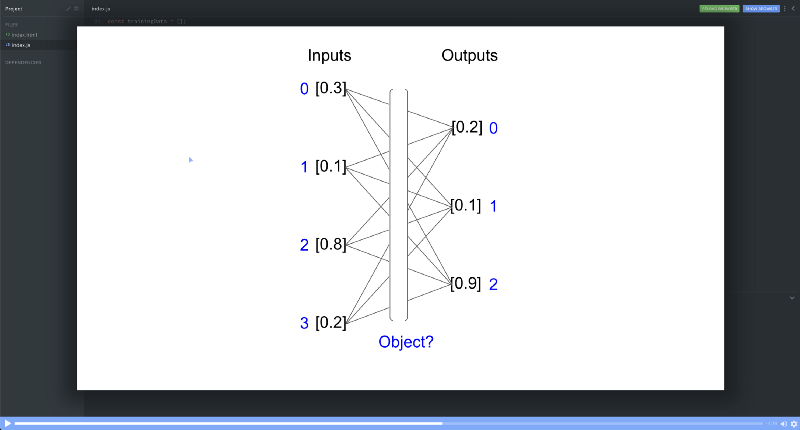

6. Working with objects

Brain.js also has a nice feature which allows it to work with objects. So in this tutorial, Robert explains how to do exactly that. To illustrate how it works, he creates a neural network which predicts the brightness of colors based upon how much red, green and blue they contain.

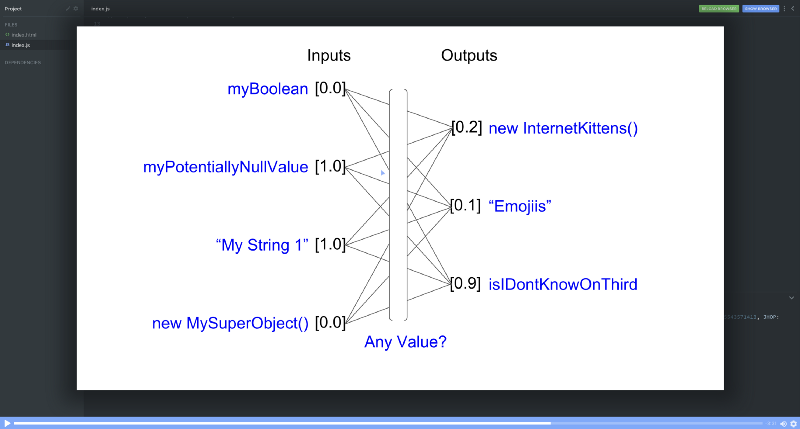

7. Learning more than numbers

When you want to solve problems in the real world, you often have to deal with values that aren’t numbers. However, a neural net only understands numbers. So that presents a challenge.

Luckily though, Brain.js is aware of this and has a built-in solution. So in this lecture, Robert explains how you can use other values than numbers to create neural nets.
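One common way to turn non-numeric values into numbers is one-hot encoding. The sketch below is plain JavaScript and isn’t necessarily how Brain.js handles it under the hood. The `oneHot` helper and the list of days are made up for the example.

```javascript
// Illustrative one-hot encoder; names and data are made up for this sketch.
function oneHot(value, categories) {
  const index = categories.indexOf(value);
  if (index === -1) {
    throw new Error(`Unknown category: ${value}`);
  }
  // A 1 in the slot of the matching category, 0 everywhere else.
  return categories.map((_, i) => (i === index ? 1 : 0));
}

const days = ["Monday", "Tuesday", "Wednesday"];
console.log(oneHot("Tuesday", days)); // [0, 1, 0]
```

Every category gets its own slot, so the net never mistakes "Wednesday" for being three times as much as "Monday".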

8. Counting with neural nets

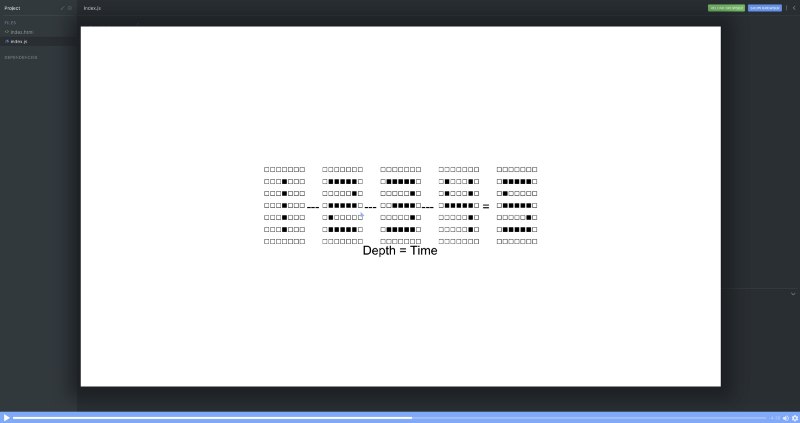

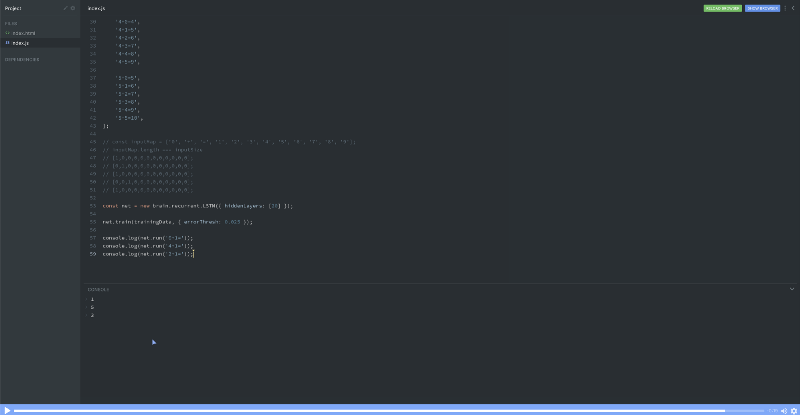

Now it’s time to get familiar with a new type of neural network: the so-called recurrent neural network. It sounds very complex, but Robert teaches you to use this tool in a simple way. He uses an easy-to-understand movie analogy to explain the concept.

He then teaches a network to count. Or in other words, the network takes a set of numbers as an input (e.g. 5,4,3) and then guesses the next number (e.g. 2) appropriately. This might seem trivial, but it’s actually a huge step towards creating machines that remember and can understand context.

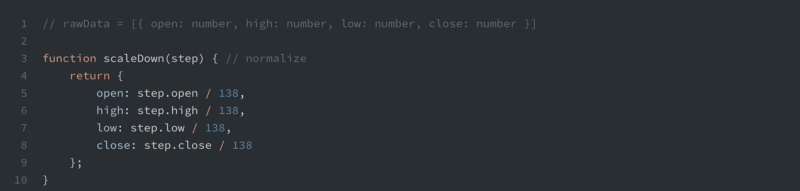

9. Stock market prediction — Normalization

Neural nets often work best with values that range around 1. So what happens when your input data is far from 1? This is a situation you’ll run into if you’re, for example, predicting stock prices. In such a case, you’d need to normalize the data. So in this lecture, Robert explains exactly how to do that in a simple manner.
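As a rough illustration, here is one common way to normalize data, known as min-max scaling, in plain JavaScript. It may differ from the approach Robert takes in the screencast, and the helper names and prices below are hypothetical.

```javascript
// Min-max scaling: squeeze values into the range 0 to 1, and back again.
// Helper names and prices are hypothetical.
function normalize(value, min, max) {
  return (value - min) / (max - min);
}

function denormalize(value, min, max) {
  return value * (max - min) + min;
}

const prices = [120, 135, 150, 180];
const low = Math.min(...prices);
const high = Math.max(...prices);

const scaled = prices.map((price) => normalize(price, low, high));
console.log(scaled); // [0, 0.25, 0.5, 1]
```

The net’s predictions come out in the same 0 to 1 range, so you would run them through `denormalize` to get back to an actual price.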

10. Stock market prediction — Predict next

Now that we know how to normalize the data, Robert demonstrates how we can create a neural net which can predict the stock price for the following day. We’ll use the same kind of network you remember from the counting tutorial, a recurrent neural network.

11. Stock market prediction — Predict next 3 steps

But simply predicting one day into the future isn’t always enough. So in this lecture, Robert goes through the forecast method of Brain.js. It allows us to predict multiple steps into the future. This ability makes a recurrent neural network more useful in various settings.

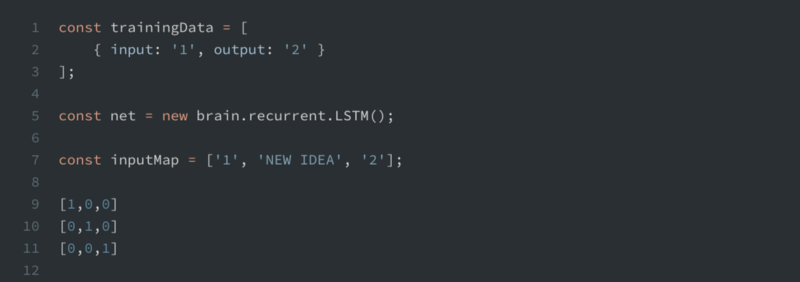

12. Recurrent neural networks learn math

In this lecture, Robert teaches a neural network to add numbers together. And he does it by inputting nothing but a bunch of strings. This screencast also gives you a better understanding of how a recurrent neural network transforms the inputs it gets into arrays before running them.

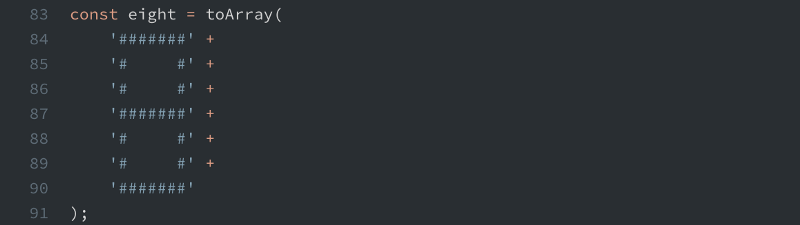

13. Lo-fi number detection

Another super-useful application for neural nets is image recognition. In this tutorial, Robert creates a neural network which can recognize ASCII-art numbers. It’s a dummy version of artificial vision.

And even though it’s very simple, it’s still dynamic in the same way a proper solution would be. Meaning you can modify the ASCII-numbers to a certain degree, and the network will still recognize which number you’re trying to give it. In other words, it’s able to generalize.
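Before a net can look at ASCII art, the characters have to become numbers. Here is a minimal sketch of that step in plain JavaScript. The `toPixels` helper and the 5x5 drawing are assumptions for the example, not code from the course.

```javascript
// Illustrative helper: turn ASCII art into an array of 0s and 1s.
function toPixels(art) {
  return art
    .split("")
    .filter((char) => char !== "\n") // line breaks are only for readability
    .map((char) => (char === "#" ? 1 : 0));
}

const one = [
  "..#..",
  ".##..",
  "..#..",
  "..#..",
  ".###.",
].join("\n");

const pixels = toPixels(one);
console.log(pixels.length); // 25
```

Each drawing becomes a flat array of 25 inputs, and changing a `#` here or there only flips a few of them, which is what gives the net room to generalize.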

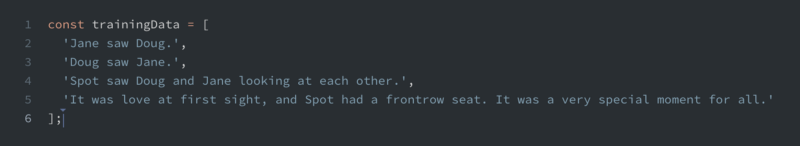

14. Writing a children's book with a recurrent net

This project is super cool. It involves training a network to write a children’s book. Again, it’s just a dummy example, but it definitely hints at the power of recurrent neural nets, as it starts to improvise a new sentence just by having looked at four different sentences.

If you want to get a hint of the amazing power of recurrent neural nets, check out Andrej Karpathy’s blog post on the subject.

15. Sentiment detection

A very common use case for machine learning and neural networks is sentiment detection. You could use it, for example, to understand how people talk about your company on social media. So to add this tool to your toolbelt as well, Robert explains how to use an LSTM network to detect sentiments.

16. Recurrent neural networks with … inputs? outputs? How?

A recurrent neural network will translate your input data into a so-called input map, which Robert explains in this screencast. This isn’t something you’ll need to think about when using Brain.js, as it’s abstracted away from you, but it’s useful to be aware of this underlying structure.
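To get a feel for the idea, here is a simplified sketch in plain JavaScript of mapping characters to numbers. Brain.js builds its own lookup tables internally, so treat the `buildCharMap` and `encode` helpers as hypothetical stand-ins rather than the library’s actual code.

```javascript
// Illustrative only: Brain.js builds its own lookup tables internally.
function buildCharMap(samples) {
  const map = new Map();
  for (const sample of samples) {
    for (const char of sample) {
      if (!map.has(char)) {
        map.set(char, map.size); // next free index
      }
    }
  }
  return map;
}

// Replace every character with its index in the map.
function encode(text, map) {
  return Array.from(text, (char) => map.get(char));
}

const charMap = buildCharMap(["1+2=3", "2+2=4"]);
console.log(encode("2+1=3", charMap)); // [2, 1, 0, 3, 4]
```

Every distinct character the net sees during training gets its own number, which is how strings like "1+2=3" can end up as arrays.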

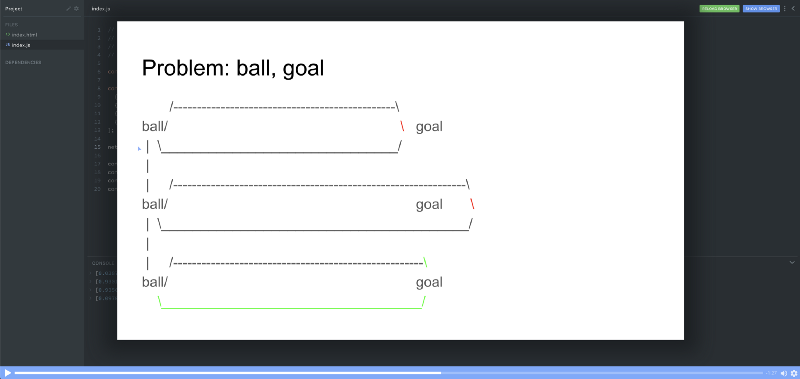

17. Simple reinforcement learning

Reinforcement learning is a really exciting frontier of machine learning, and in this lecture, you’ll get a little taste of it. In just a few minutes Robert will give you a conceptual demonstration of what reinforcement learning is, using the simplest net possible, an XOR net.

18. Building a recommendation engine

Finally, Robert ends the lectures with a recommendation engine, which learns a user’s preference for colors. Recommendation engines are used heavily by companies like Netflix and Amazon to give users more relevant suggestions, so this is a very useful subject to learn more about.

19. Closing thoughts

If you make it this far: congrats! You’ve taken the first step towards becoming a machine learning engineer. But this is actually where your journey begins, and Robert has some really interesting thoughts on how you should think about your machine learning journey going forward, and how you should use your intuition as a guide.

After watching this, you’ll be both inspired and empowered to go out into the world and tackle problems with machine learning!

And don’t forget to follow Robert on Twitter, and also thank him for his amazing Christmas gift to all of us!

Happy coding!

Thanks for reading! My name is Per Borgen, I'm the co-founder of Scrimba – the easiest way to learn to code. You should check out our responsive web design bootcamp if you want to learn to build modern websites on a professional level.
