by Sam Jordan

We studied how students performed in technical interviews. Where they went to school didn’t matter.

interviewing.io is a platform where engineers practice technical interviewing anonymously. If things go well, they can unlock the ability to participate in real, but still anonymous, interviews with top companies like Twitch, Lyft and more.

Earlier this year, we launched an offering specifically for university students. It was intended to help level the playing field right at the start of people’s careers.

The problem

Here’s the sad truth: given the state of college recruiting today, if you haven’t attended one of a very few top schools, your chances of interacting with companies on campus are slim. It’s not fair, and it sucks, but university recruiting is still dominated by career fairs. Companies pragmatically choose to visit the same few schools every year. Despite the fact that the career fair is one of the most antiquated, biased forms of recruiting that there is, the format persists. This is likely because there doesn’t seem to be a better way to quickly connect with students at scale.

So, despite the increasingly loud conversation about diversity, campus recruiting marches on, and companies keep doing the same thing expecting different results.

In a previous blog post, we explained why companies should stop courting students from the same five schools.

Regardless of how important you think this idea is (for altruistic reasons, perhaps), you may still be skeptical about the value and practicality of broadening the college recruiting effort. You probably concede that it’s rational to visit top schools, given limited resources. People are often willing to agree that there are perfectly qualified students coming out of non-top colleges, but they maintain that such students are relatively rare.

We’re here to show you, with some nifty data from our university platform, that this is simply not true.

To be fair, this isn’t the first time we’ve looked at whether where you went to school matters. In a previous post, we found that taking Udacity and Coursera programming classes mattered way more than where you went to school. And way back in the day, one of our founders figured out that where you went to school didn’t matter at all — but that the number of typos and grammatical errors on your resume did.

So, what’s different this time? The big, exciting difference is that these prior analyses were focused mostly on engineers who had been working for at least a few years already. This made it possible to argue that a few years of work experience smooths out any performance disparity that comes from having attended (or not attended) a top school.

In fact, the good people at Google found that while GPA didn’t really matter after a few years of work, it did matter for college students. So, we wanted to face this question head-on and look specifically at college juniors and seniors while they were still in school. Even more pragmatically, we wanted to see if companies limiting their hiring efforts to just top schools were getting higher-caliber candidates.

Before delving into the numbers, here’s a quick rundown of how our university platform works and what kind of data we collect.

The setup

For students who want to practice on interviewing.io, the first step is a brief (~15-minute) coding assessment on Qualified to test basic programming competency. Students who pass this assessment (that is, those who are ready to code while another human being breathes down their necks) get to start booking practice interviews.

When an interviewer and an interviewee match on our platform, they meet in a collaborative coding environment with voice, text chat, and a whiteboard and jump right into a technical question. Interview questions on the platform tend to fall into the category of what you’d encounter during a phone screen for a back-end software engineering role. Interviewers typically come from top companies like Google, Facebook, Dropbox, Airbnb, and more.

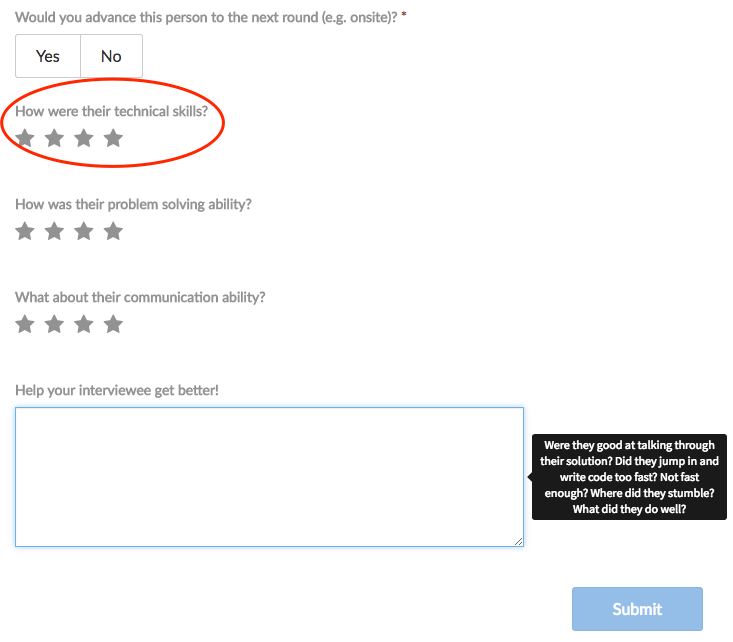

After every interview, interviewers rate interviewees in a few different categories, including technical ability. Technical ability gets rated on a scale of 1 to 4, where 1 is “poor” and 4 is “amazing!” On our platform, a score of 3 or above has generally meant that the person was skilled enough to move forward. You can see what our feedback form looks like below:

On our platform, we’re fortunate to have thousands of students from all over the U.S., spanning over 200 universities. We thought this presented a unique opportunity to look at the relationship between school tier and interview performance for both juniors (interns) and seniors (new grads).

To study this relationship, we first split schools into the following four tiers, based on rankings from U.S. News & World Report:

- “Elite” schools (like MIT, Stanford, Carnegie Mellon, UC-Berkeley)

- Top 15 schools (excluding the elite tier; for example, University of Wisconsin, Cornell, Columbia)

- Top 50 schools (excluding the top 15; for example, Ohio State University, NYU, Arizona State University)

- The rest (like Michigan State, Vanderbilt University, Northeastern University, UC-Santa Barbara)

Then, we ran some statistical significance testing on interview scores vs. school tier to see if school tier mattered for both interns (college juniors) and new grads (college seniors). Our sample comprised a set of roughly 1,000 students.
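The post doesn't say which significance test was used, so what follows is only a minimal sketch of one common choice: a chi-square test of independence between school tier and technical score. The counts are invented purely for illustration and are not our data, and the function name `chi_square_independence` is hypothetical.

```python
# Hypothetical counts, NOT the study's data. Each row is a school tier;
# the columns are how many interviews received technical scores 1, 2, 3, 4.
observed = {
    "elite":  [12, 30, 40, 18],
    "top_15": [10, 28, 44, 18],
    "top_50": [14, 33, 38, 15],
    "rest":   [13, 29, 41, 17],
}


def chi_square_independence(table):
    """Return (statistic, degrees_of_freedom) for a tier-by-score table."""
    rows = list(table.values())
    row_totals = [sum(row) for row in rows]
    col_totals = [sum(col) for col in zip(*rows)]
    grand_total = sum(row_totals)

    statistic = 0.0
    for row, row_total in zip(rows, row_totals):
        for count, col_total in zip(row, col_totals):
            # Count we would expect if tier and score were unrelated.
            expected = row_total * col_total / grand_total
            statistic += (count - expected) ** 2 / expected

    dof = (len(rows) - 1) * (len(col_totals) - 1)
    return statistic, dof


# Critical value of the chi-square distribution at alpha = 0.05 for 9
# degrees of freedom (4 tiers x 4 scores), taken from a standard table.
CRITICAL_95_DOF_9 = 16.919

stat, dof = chi_square_independence(observed)
tiers_differ = stat > CRITICAL_95_DOF_9
print(f"chi-square = {stat:.2f}, dof = {dof}, tiers differ: {tiers_differ}")
```

With counts this similar across tiers, the statistic lands far below the critical value, which is the pattern "no significant difference" describes. A rank-based test such as Kruskal-Wallis would be another reasonable option for ordinal scores like these.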

Does school have anything to do with interview performance?

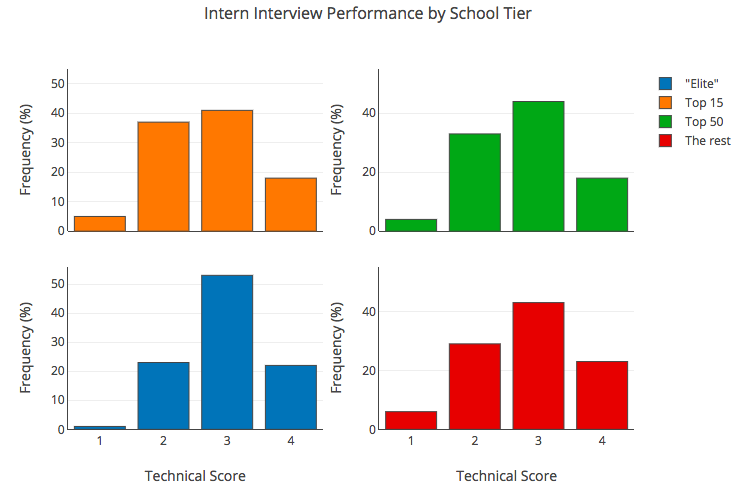

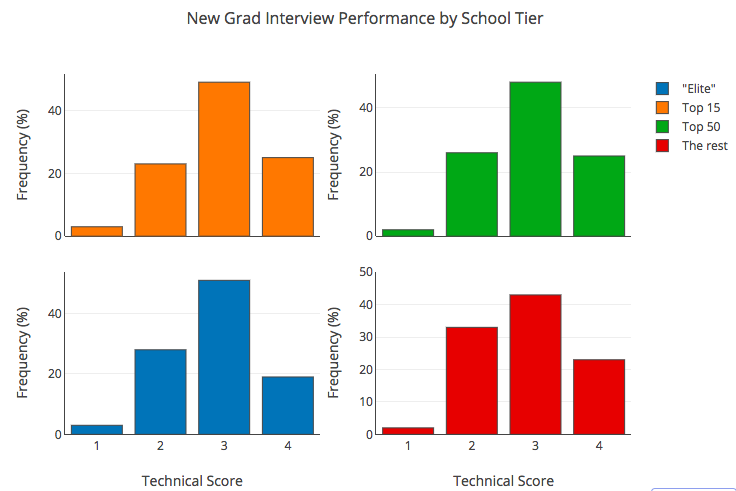

In the graphs below, you can see technical score distributions for interviews with students in each of the four school tiers (see legend). As you recall from above, each interview is scored on a scale of 1 to 4, where 1 is the worst and 4 is the best.

First, the juniors:

And then, the seniors:

What’s pretty startling is that the shape of these distributions, for both juniors and seniors, is remarkably similar. Indeed, statistical significance testing revealed no difference between students of any tier when it came to interview performance.

Just to note: of course, this hinges on everyone completing a quick 15-minute coding challenge first, to ensure they’re ready for synchronous technical interviews. We’re excited about this because companies can replicate this step in their process as well!

What this means is that students at top-tier schools are achieving the same results as those at “no-name” schools. So the question becomes: if the students are comparable in skill, why are companies spending egregious amounts of money attracting only a subset of them?

Okay, so what are companies missing?

Besides missing out on great, cheaper-to-acquire future employees, companies are missing out on an opportunity to save time and money. Right now, a ridiculous amount of money is being spent on university recruiting. We’ve previously cited the $18k price tag just for entry to the MIT career fair. In a study done through the Harvard Business Review, Lauren Rivera reveals that one firm budgeted nearly $1 million just for social recruiting events on a single campus.

The high price tag of these events also means that it makes even less sense for smaller companies or startups to try to compete with high-profile, high-profit tech giants. Most of the top schools that are being heavily pursued already have enough recruiters vying for their students. Perhaps unwittingly, companies that join this pursuit seem to be working against their own desire for high diversity and long-term sustainable growth.

Even when companies do believe that talent is evenly distributed across school tiers, there are still reasons why they might recruit at top schools. Other factors help elevate certain schools in a recruiter’s mind. There are long-standing company-school relationships (for example, the number of alumni who currently work at the company). There are signaling effects, too: companies get Silicon Valley bonus points by saying their eng team is made up of a bunch of ex-Stanford, ex-MIT, ex-and-so-on students.

A quick word about selection bias

Since this post appeared on Hacker News, there’s been some loud, legitimate discussion about how the pool of students on interviewing.io may not be representative of the population at large. Indeed, we do have a self-selected pool of students who decided to practice interviewing.

Certainly, all the blog posts we publish are subject to this (very valid) line of criticism, as is this post in particular.

As such, selection bias in our user pool might mean that 1) we’re getting only the worst students from top schools (because, presumably, the best ones don’t need the practice), or 2) we’re getting only the best/most motivated students from non-top schools — or both.

Any of these scenarios is entirely possible, but there are a few reasons why we believe that what we’ve published here might hold true regardless.

First of all, in our experience, regardless of their background or pedigree, everyone is scared of technical interviewing. Case in point: before we started working on interviewing.io, we didn’t really have a product yet. So before investing a lot of time and heartache into this questionable undertaking, we wanted to test the waters to see if interview practice was something engineers really wanted — and more so, who these engineers that wanted practice were.

So, we put up a pretty mediocre landing page on Hacker News…and got something like 7,000 signups on the first day. Of these 7,000 signups, roughly 25% were senior (4+ years of experience) engineers from companies like Google and Facebook. Now, this isn’t to say that they’re necessarily the best engineers out there, but it does suggest that the engineers the market seems to value the most still needed our services.

Another data point comes from one of our founders. Every year, Aline does a guest lecture on job search preparedness for a technical communication course at MIT. This course is one way to fulfill the computer science major communication requirement, so enrollment tends to run the gamut of computer science students. Before every lecture, she sends out a survey asking students what their biggest pain points are in preparing for their job search. Every year, trepidation about technical interviewing is either at the top of the list or second from the top.

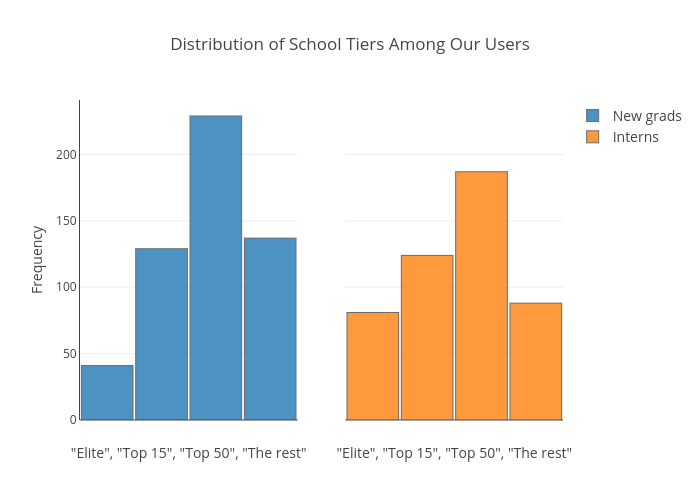

And though this doesn’t directly address the issue of whether we’re only getting the “best of the worst or the worst of the best” (and I hope the above has convinced you there’s more to it than that), here’s the distribution of school tiers among our users. I expect it mirrors the kinds of distributions companies see in their student applicant pool as well:

So what can companies do?

Companies may never stop recruiting at top-tier schools entirely. But they ought to at least include schools outside of that very small circle in the search for future employees.

The end result of the data is the same: for good engineers, the school they attended means a lot less than we think. The time and money that companies spend to compete for candidates within the same select few schools would be better spent creating opportunities that include everyone. They could also develop tools to vet students more fairly and efficiently.

As you saw above, we used a 15-minute coding assessment to cull our inbound student flow, and just a short challenge leveled the playing field between students from all walks of life. At the very least, we’d recommend employers do the same thing in their process. But, of course, we’d be remiss if we didn’t suggest one other thing.

At interviewing.io, we’ve proudly built a platform that grants the best-performing students access to top employers, no matter where they went to school or where they come from. Our university program, in particular, lets companies reach a far larger pool of students for the same cost as attending one or two career fairs at top target schools.

Want diverse, top talent without the chase? Sign up to be an employer on our university platform!