Illustration (and most in this article) by Adit Bhargava

Big O notation is used to communicate how fast an algorithm is. This can be important when evaluating other people’s algorithms, and when evaluating your own! In this article, I’ll explain what Big O notation is and give you a list of the most common running times for algorithms using it.

Algorithm running times grow at different rates

My son explains big ‘O’ notation.

My son Judah has a lot of toys. In fact, he has acquired a billion toys! You’d be surprised how quickly a kid can get that many toys if he’s the first grandchild on both sides of the family.

Anyway, Judah has a problem. When his friends visit and want to play with a specific toy, it can take FOREVER to find the toy. So he wants to create a search algorithm to help him find a specific toy as quickly as possible. He is trying to decide between two different search algorithms: simple search and binary search. (Don’t worry if you are not familiar with these algorithms.)

The algorithm chosen needs to be both fast and correct. On one hand, binary search is faster. And Judah often only has about 30 seconds before his friend gets bored looking for a toy. On the other hand, a simple search algorithm is easier to write, and there is less chance of bugs being introduced. It sure would be embarrassing if his friend found bugs in his code! To be extra careful, Judah decides to time both algorithms with a list of 100 toys.

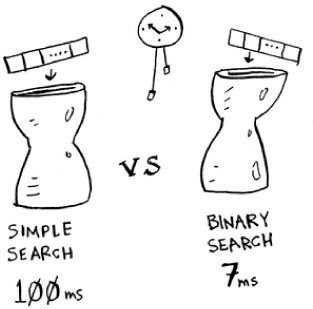

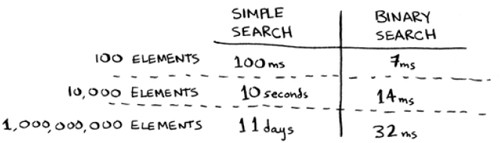

Let’s assume it takes 1 millisecond to check one toy. With simple search, Judah has to check 100 toys, so the search takes 100 ms to run. On the other hand, he only has to check 7 toys with binary search (log2 100 is roughly 7, don’t worry if this math is confusing since it isn’t the main point of this article), so that search takes 7 ms to run. But really, the list will have a billion toys. If it does, how long will simple search take? How long will binary search take?
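If you'd like to check those counts yourself, here is a minimal sketch of both searches that also counts how many toys get checked. The function names and the list of numbered toy IDs are assumptions made for illustration, and binary search only works because the list is sorted.

```python
def simple_search(items, target):
    """Check items one by one; return (index, number of checks)."""
    checks = 0
    for index, item in enumerate(items):
        checks += 1
        if item == target:
            return index, checks
    return None, checks


def binary_search(sorted_items, target):
    """Halve the search range on every check; return (index, number of checks)."""
    low, high = 0, len(sorted_items) - 1
    checks = 0
    while low <= high:
        mid = (low + high) // 2
        checks += 1
        if sorted_items[mid] == target:
            return mid, checks
        if sorted_items[mid] < target:
            low = mid + 1   # target can only be in the upper half
        else:
            high = mid - 1  # target can only be in the lower half
    return None, checks


toys = list(range(100))  # hypothetical toy IDs, already sorted

# Worst case over every possible toy Judah's friend could ask for.
worst_simple = max(simple_search(toys, toy)[1] for toy in toys)
worst_binary = max(binary_search(toys, toy)[1] for toy in toys)
print(worst_simple, worst_binary)  # 100 7
```

At 1 ms per check, those worst cases are the 100 ms and 7 ms from the example above.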

Running time for simple search vs. binary search, with a list of 100 elements

Judah runs binary search with 1 billion toys, and it takes 30 ms (log2 1,000,000,000 is roughly 30). “30 ms!” he thinks. “Binary search is about 15 times faster than simple search, because simple search took 100 ms with 100 elements, and binary search took 7 ms. So simple search will take 30 × 15 = 450 ms, right? Way under the 30 seconds it takes for my friend to get bored.” Judah decides to go with simple search. Is that the right choice?

No. Turns out, Judah was wrong and lost a friend for life. The run time for simple search with 1 billion items will be 1 billion ms, which is more than 11 days! The problem is, the run times for binary search and simple search don’t grow at the same rate.

Run times grow at very different speeds! As the number of items increases, binary search takes a little more time to run, but simple search takes a lot more time to run. So as the list of numbers gets bigger, binary search suddenly becomes a lot faster than simple search.

So Judah was wrong about binary search always being 15 times faster than simple search. If there are 1 billion toys, it’s more like 33 million times faster.
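The arithmetic behind those numbers is easy to reproduce. This is a rough sketch that assumes, as the example does, 1 ms per check; the helper names are made up for illustration, and the binary search count is rounded up to a whole number of checks.

```python
import math

MS_PER_CHECK = 1  # the article's assumption: 1 ms to check one toy


def simple_search_ms(n):
    """Worst case: every one of the n toys is checked."""
    return n * MS_PER_CHECK


def binary_search_ms(n):
    """Worst case: roughly log2(n) checks, rounded up."""
    return math.ceil(math.log2(n)) * MS_PER_CHECK


for n in (100, 1_000_000_000):
    simple_ms = simple_search_ms(n)
    binary_ms = binary_search_ms(n)
    ratio = simple_ms / binary_ms
    print(f"{n:>13,} toys: simple {simple_ms:,} ms, binary {binary_ms} ms, about {ratio:,.0f}x")

# Convert a billion milliseconds to days.
days = simple_search_ms(1_000_000_000) / (1000 * 60 * 60 * 24)
print(f"Simple search on a billion toys: about {days:.1f} days")
```

The ratio is not a constant 15. It depends on the list size, which is exactly why Judah's estimate failed.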

It is very important to know how the running time increases as the list size increases. That’s where Big O notation comes in.

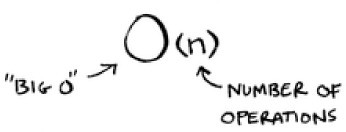

Big O notation tells you how fast an algorithm is. For example, suppose you have a list of size n. Simple search needs to check each element, so it will take n operations. The run time in Big O notation is O(n).

Where are the seconds? There are none: Big O doesn’t tell you the speed in seconds. Big O notation lets you compare the number of operations. It tells you how fast the number of operations grows as the input gets bigger.

Let’s do another example. Binary search needs log n operations to check a list of size n. What’s the running time in Big O notation? It’s O(log n). In general, Big O notation is written as O(number of operations).

This tells you the number of operations an algorithm will make. It’s called Big O notation because you put a “big O” in front of the number of operations.

Big O establishes a worst-case run time

Suppose you’re using simple search to look for a user in your user database. You know that simple search takes O(n) time to run, which means in the worst case, your algorithm will have to look through every user in the database. In this case, you’re looking for a user with the name ‘aardvark213’. This is the first user in the list. So your algorithm didn’t have to look at every entry; it found it on the first try. Did the algorithm take O(n) time? Or did it take O(1) time because it found the person on the first try?

Simple search still takes O(n) time. In this case, the algorithm found what it was looking for instantly. That’s the best-case scenario. But Big O notation is about the worst-case scenario. So you can say that, in the worst case, the algorithm will have to look through every user in the database once. That’s O(n) time. It’s a reassurance — you know that simple search will never be slower than O(n) time.
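To see the best case and the worst case side by side, here is a small sketch that counts how many entries simple search examines. Only ‘aardvark213’ comes from the example above; the other user names and the `count_checks` helper are invented for illustration.

```python
def count_checks(users, target):
    """Run a simple search and return how many entries were examined."""
    checks = 0
    for user in users:
        checks += 1
        if user == target:
            break
    return checks


# Hypothetical user list; only 'aardvark213' appears in the article.
users = ["aardvark213", "beaver42", "cheetah7", "dingo99", "zebra500"]

best = count_checks(users, "aardvark213")  # first entry: best case
worst = count_checks(users, "zebra500")    # last entry: worst case
missing = count_checks(users, "nobody")    # absent: also the worst case
print(best, worst, missing)  # 1 5 5
```

The search never examines more than n entries, which is the guarantee O(n) gives you.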

Some common Big O run times

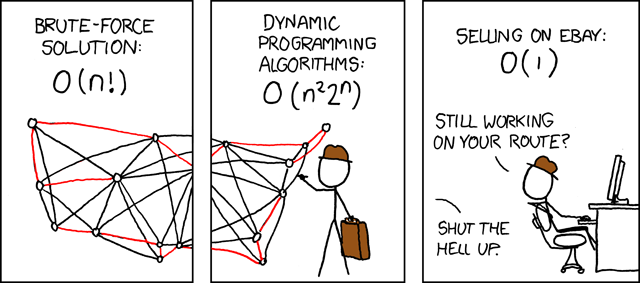

From [xkcd](https://xkcd.com/399/). If you don’t get the joke, learn more about the traveling salesman problem in my course from Manning Publications. :)

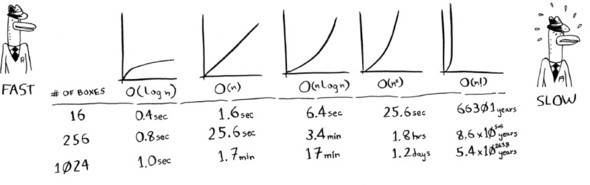

Here are five Big O run times that you’ll encounter a lot, sorted from fastest to slowest:

- O(log n), also known as log time. Example: Binary search.

- O(n), also known as linear time. Example: Simple search.

- O(n * log n). Example: A fast sorting algorithm, like quicksort (in the average case).

- O(n²). Example: A slow sorting algorithm, like selection sort.

- O(n!). Example: A really slow algorithm, like a brute-force solution to the traveling salesperson problem.
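To get a feel for why O(n!) sits at the bottom of that list, here is a rough sketch that counts every order in which a brute-force traveling salesperson solver could visit the cities. It only counts the orderings and does not measure any distances, and the function name is made up for illustration.

```python
from itertools import permutations
from math import factorial


def brute_force_route_count(cities):
    """Count every possible visiting order, one at a time, as brute force would."""
    return sum(1 for _ in permutations(cities))


for n in range(1, 9):
    cities = range(n)  # hypothetical city IDs
    routes = brute_force_route_count(cities)
    assert routes == factorial(n)  # the count is exactly n!
    print(f"{n} cities: {routes:,} possible routes")
```

Going from 7 cities to 8 multiplies the work by 8, and every extra city multiplies it again by an even bigger number.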

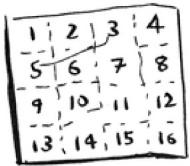

Visualizing different Big O run times

Suppose you’re drawing a grid of 16 boxes, and you can choose from 5 different algorithms to do so. If you use the first algorithm, it will take you O(log n) time to draw the grid. You can do 10 operations per second. With O(log n) time, it will take you 4 operations to draw a grid of 16 boxes (log 16 base 2 is 4). So it will take you 0.4 seconds to draw the grid. What if you have to draw 1,024 boxes? It will take you log 1,024 = 10 operations, or 1 second to draw a grid of 1,024 boxes. These numbers are using the first algorithm.

The second algorithm is slower: it takes O(n) time. It will take 16 operations to draw 16 boxes, and it will take 1,024 operations to draw 1,024 boxes. How much time is that in seconds?

Here’s how long it would take to draw a grid for the rest of the algorithms, from fastest to slowest:

There are other run times, too, but these are the five most common.

This is a simplification. In reality you can’t convert from a Big O run time to a number of operations this neatly, but it is a good enough estimate for comparing algorithms.
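You can work out the grid-drawing times for all five run times with a few lines of code. This sketch uses the same simplification as above: it treats the Big O expression as an exact operation count and assumes 10 operations per second. The dictionary, the helper names, and the choice to report huge results by digit count are all assumptions made for illustration.

```python
import math

OPS_PER_SECOND = 10  # from the grid example above

# Each run time treated as an exact operation count (a simplification).
run_times = {
    "O(log n)": lambda n: math.log2(n),
    "O(n)": lambda n: n,
    "O(n * log n)": lambda n: n * math.log2(n),
    "O(n^2)": lambda n: n ** 2,
    "O(n!)": lambda n: math.factorial(n),
}


def operations(label, boxes):
    """Number of operations needed to draw a grid with this many boxes."""
    return run_times[label](boxes)


def describe_seconds(ops):
    """Format the drawing time; astronomically large values get a digit count."""
    if ops > 10 ** 15:
        digits = len(str(ops // OPS_PER_SECOND))
        return f"a {digits}-digit number of seconds"
    return f"{ops / OPS_PER_SECOND:,.1f} s"


for label in run_times:
    for boxes in (16, 1024):
        ops = operations(label, boxes)
        print(f"{label:<13} {boxes:>5} boxes: {describe_seconds(ops)}")
```

The first two rows match the numbers worked out by hand: 0.4 s and 1 s for O(log n), then 1.6 s and 102.4 s for O(n).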

Conclusion

Here are the main takeaways:

- Algorithm speed isn’t measured in seconds, but in the growth of the number of operations.

- Instead, we talk about how quickly the run time of an algorithm increases as the size of the input increases.

- Run time of algorithms is expressed in Big O notation.

- O(log n) is faster than O(n), but it gets a lot faster as the list of items you’re searching grows.

I hope this article brought you more clarity about Big O notation. This article is based on a lesson in my video course from Manning Publications called Algorithms in Motion. The course is based on the amazing book Grokking Algorithms by Adit Bhargava. He’s the one who drew all the fun illustrations in this article.

If you learn best through books, get the book! If you learn best through videos, consider buying my course. You can get 39% off my course by using the code ‘39carnes’.