Are you tired of all the AI talk yet?

I am too, but I'm also intrigued by the technology behind it.

If you'd like to dive into some of the details behind how AI actually works and take a break from the many creepy AI examples on the internet, check this out:

Google has released a free set of training courses for generative AI. Here's their announcement post.

Picture for generative AI courses

Video Walkthrough

I've made a short video of the coursework if you'd prefer a visual walkthrough of my first impressions:

Overview

There are 10 courses in this free learning path. Many are aimed at beginners with no prerequisite knowledge. Some suggest a background in Python, SQL, and/or machine learning. All of them deliver content through a combination of videos, articles, labs and quizzes.

There are badges to show that you've completed them, and I'm happy to see a freely available set of courses around the technology behind AI.

Screenshot of Google course badge

The amount of content produced by and about AI is overwhelming right now, but it's a safe bet that the technology is going to stick around. As such, it's best to arm yourself with as much information about it as you can so that you can responsibly create tools and content for the future.

Course Material

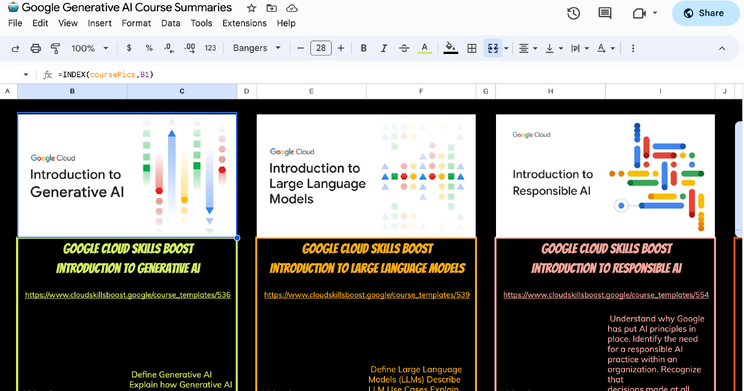

I've created a Google Sheet with all the course info pulled from each of these courses. Check it out here. Google has done a fine job of creating a brief FAQ for each of the courses, and I've pulled them all into one sheet for easy comparison.

Screenshot of Google Sheet summary of all courses

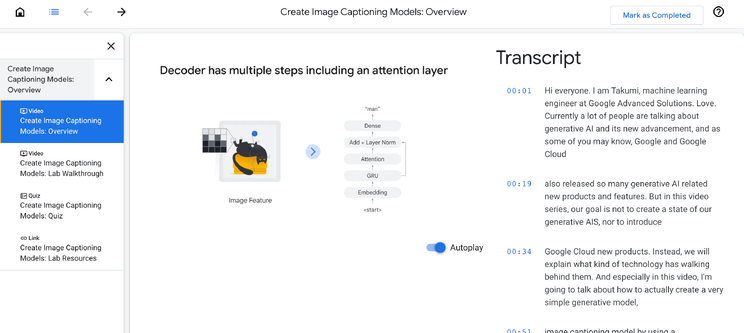

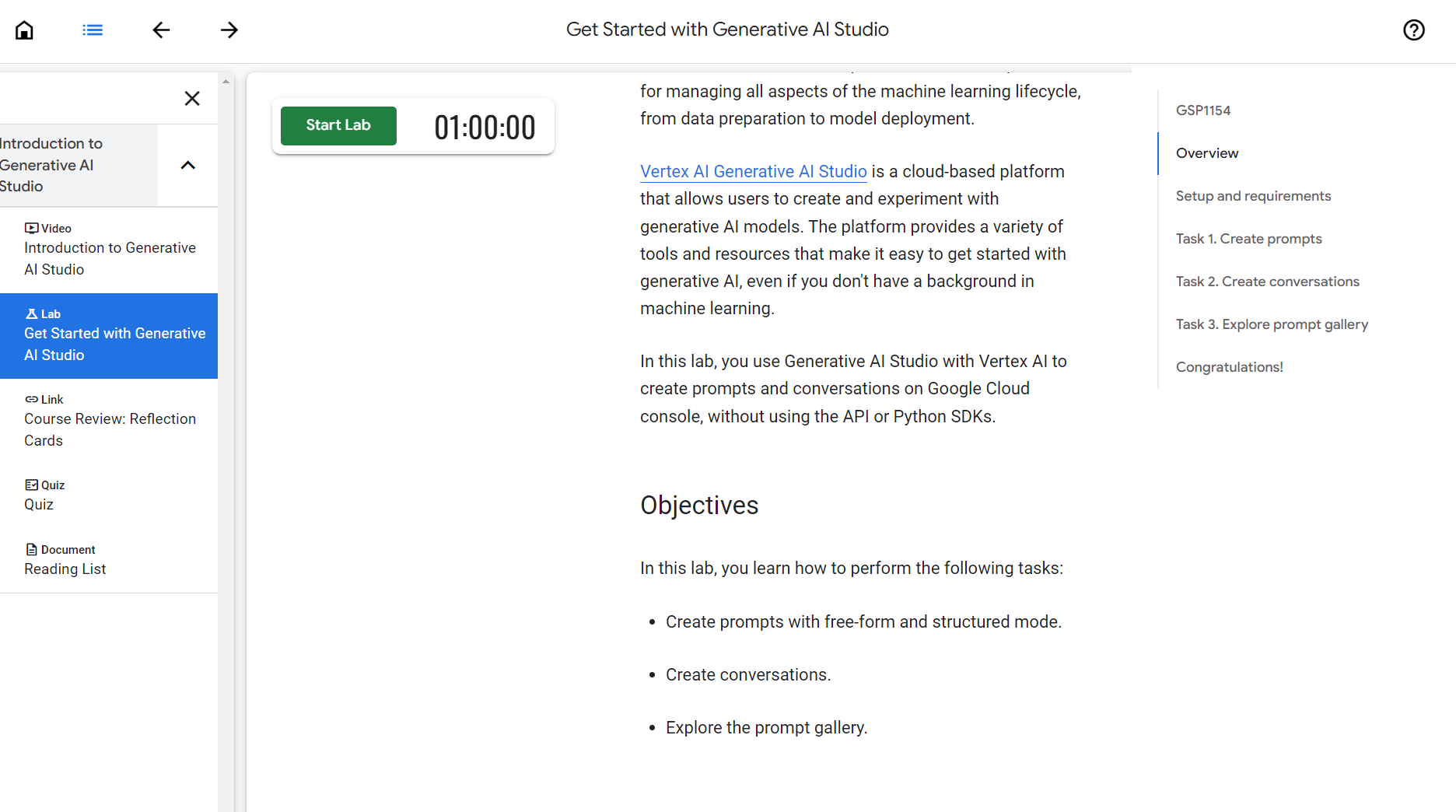

Inside each course, you'll find straightforward navigation for the content. It's broken into video instruction, a curated collection of readings to peruse, and quizzes. Some courses also have timed online labs.

Video content for the Create Image Captioning Models course

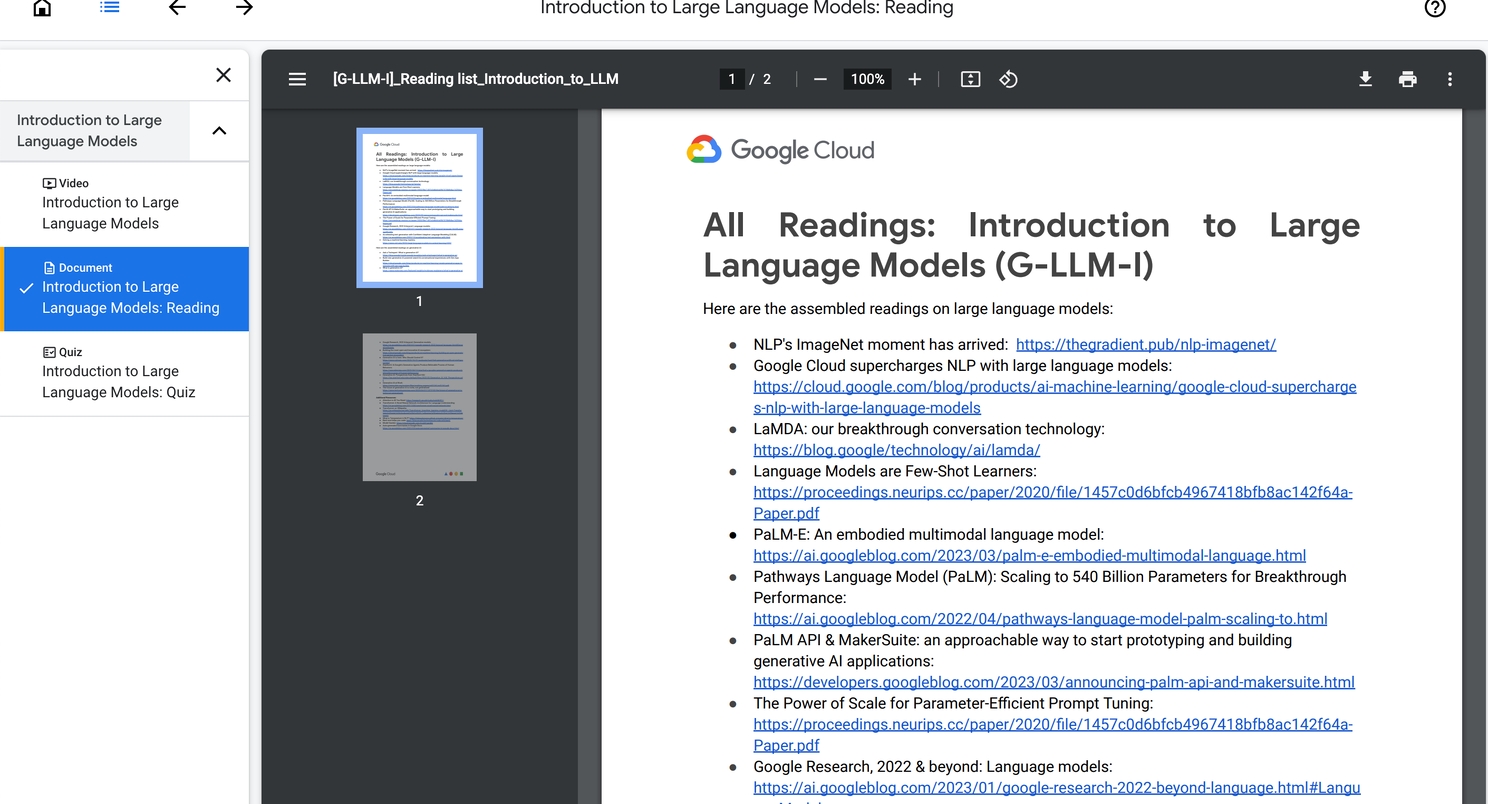

The curated list of readings is quite comprehensive, and you could easily exceed the estimated 45 minutes it takes to complete the modules if you read through every one. Still, it's nice to have such a large list of resources compiled in one place.

The list of readings for the Intro to Large Language Models course

Some of the courses also include hosted labs. If you've taken any of Google's Coursera courses, you'll be familiar with this delivery method. You're given a handful of tasks, and a countdown timer (in this case, one hour) begins when you start the lab.

Screenshot of lab work

Here is the full list of courses:

- Introduction to Generative AI – designed to be an overview of what generative AI is and how it differs from traditional machine learning methods.

- Introduction to Large Language Models – explores what large language models are, where they are used, and how to use prompt tuning. (If you haven't noticed, prompt writing is being touted as a skill of the future right now.)

- Introduction to Responsible AI – an ethics-focused course on what responsible AI is, how it's implemented in Google products, and why it's important. This course introduces Google's 7 AI Principles. I didn't know this was a thing, but there's a whole page devoted to it. The principles cover topics ranging from social responsibility to safety, accountability, and privacy design, and I was happy to see that a large effort is being made to build in solid principles.

- Generative AI Fundamentals – A quiz covering topics from the first three courses.

- Introduction to Image Generation – an introduction to diffusion models, a family of models used in image generation. Some pre-existing knowledge of machine learning, deep learning, convolutional neural nets and/or Python programming is suggested.

- Encoder-Decoder Architecture – an overview of a machine learning architecture for tasks like machine translation, text summarization, and question answering. Python and TensorFlow knowledge is suggested as a prerequisite.

- Attention Mechanism – a technique that allows neural networks to focus on specific parts of an input sequence. Some pre-existing knowledge of machine learning, deep learning, natural language processing, and/or Python programming is suggested.

- Transformer Models and BERT Model – BERT stands for Bidirectional Encoder Representations from Transformers, in case you didn't know. You'll learn the main components of the Transformer architecture. Intermediate machine learning experience and knowledge of Python and TensorFlow are recommended.

- Create Image Captioning Models – how to create an image captioning model using deep learning. Deep learning, machine learning, natural language processing, computer vision and Python are recommended prerequisites.

- Introduction to Generative AI Studio – you'll walk through demos of Generative AI Studio, which helps you prototype and customize generative AI models. There is a hands-on lab at the end.

My Thoughts

After spending some time browsing through all of these courses, I found a nice mix of truly beginner-friendly content and some intermediate-level material that requires previous knowledge of machine learning, Python, deep learning, and/or natural language processing.

I appreciate that there are answers to many common questions in the drop-down menus for each course (and I've compiled all of those in one place in this Google Sheet).

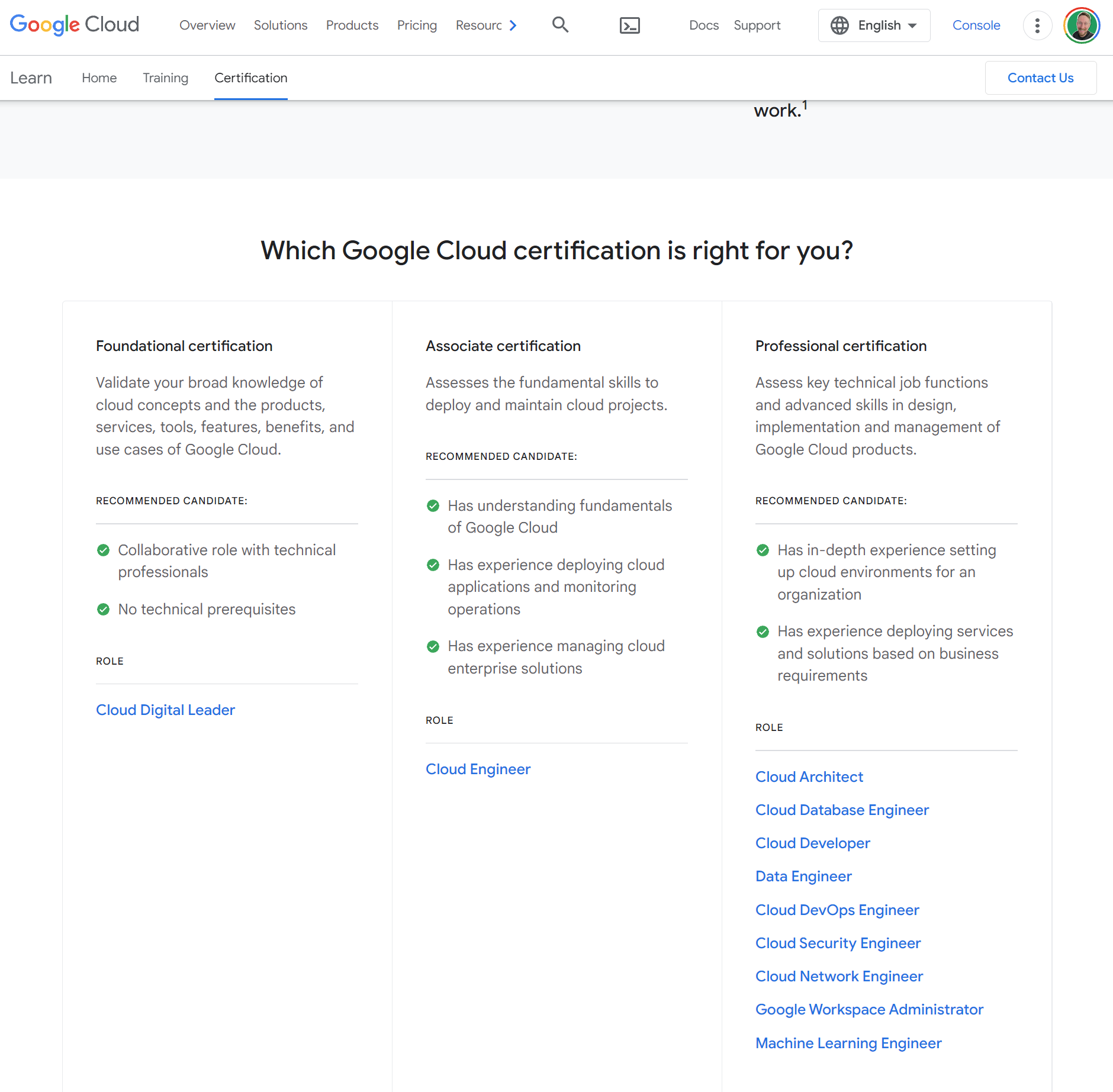

Screenshot of Google Cloud certifications

This is a great starting point if you are truly interested in the inner workings of AI. It also looks to be a potential on-ramp to some of Google Cloud's larger certifications, as there are links to further training sprinkled throughout the courses.

What do you think?

👋 My name is Eamonn Cottrell, and I'm building content around Google Sheets & Workspace.

Get my 📨 newsletter here. Find me on 📺 YouTube here.

Please ask questions in the comments, or send me a message; I love making new spreadsheet connections!