by Tiffany Eaton

Learning the basics of Conversational UI with a UX Designer for Amazon’s Alexa

During my internship at Intuit last summer, I was part of a hackathon team that created a conversational UI experience. We interviewed a few people who had experience in this field, and I had the opportunity to interview Angela Nguyen. Angela is a UX designer at Amazon, working on the Alexa Team. You can view her work here.

What is your process for designing conversational user interfaces?

It depends on the conversation. I work mostly with shopping questions, because I am on the shopping team.

My process always starts with the customer and what their needs are.

For example, if they are trying to buy paper towels, then the first thing I do is think about the AI’s response. Is it going to return paper towels based on what they have already purchased or on the present situation? Where are they making this order, and are they able to pay by credit card? It depends on this person’s circumstances, because the same question can lead the AI to answer differently for each person.

Every situation is different for every person.

Let’s say a given person always buys paper towels, and maybe it’s the same towels each time. I like to create a tree of answers (“mind mapping”). It starts with that one question and branches depending on the circumstances of this person. If this person has bought the same paper towels many times, Alexa might respond with: “Do you want to buy paper towels again?” If they have never bought paper towels, the AI might recommend a brand.
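
The tree of answers described here can be sketched in code. This is only an illustration of the branching idea, not how Alexa works: the `Customer` fields, the function name, and the exact wording of the responses are all assumptions made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Customer:
    # Hypothetical profile fields, invented for this sketch.
    past_purchases: dict[str, int] = field(default_factory=dict)  # item -> times bought
    has_payment_method: bool = True


def respond_to_buy_request(customer: Customer, item: str) -> str:
    """Walk a small answer tree: the same question gets a different reply per person."""
    # Branch 1: the person cannot pay yet, so no order can be placed.
    if not customer.has_payment_method:
        return f"You need to add a payment method before I can order {item}."
    # Branch 2: the person has bought this item before, so offer a reorder.
    if customer.past_purchases.get(item, 0) > 0:
        return f"Do you want to buy {item} again?"
    # Branch 3: first-time buyer, so offer a recommendation.
    return f"I can recommend a brand of {item}. Would you like to hear it?"
```

A repeat buyer such as `Customer(past_purchases={"paper towels": 3})` lands on the reorder branch, while a default `Customer()` lands on the recommendation branch. A real mind map would have many more branches than these three.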

How do you design a UI for voice or chatbots?

There are headless devices, voice products that don’t have a face or UI. This is completely voice UX. Then there are “face devices” which have a screen, kind of like Siri.

When designing for products with a face, it is good to consider the question, “Do you want to show the same information on screen that the voice is saying?” If the voice says, “I have paper towels that are $10 and delivered in 2 hours,” will the screen show the image, price, etc., or just the image? It is good to consider whether the person is looking at something.

Can we simplify the graphics or voices?

A good example of this is YouTube. When you land on a video page, the video starts playing, but you can still scroll down to the other videos while watching the main one.

While your brain is comprehending the audio, your eyes are taking in other information. The human brain can multi-task, so when we hear audio and are given visuals, they don’t have to match. You can be listening and looking at comments at the same time. You can consider this when designing UI and voice. You don’t have to show the things you are saying.

What would you say the visual representation of text looks like with some of the products you have been working on?

The UI might be a picture of a paper towel whereas the voice would say, “This is Amazon’s choice for paper towels. This is the top result, most highly rated, it costs $10. You can get it at noon.” The voice would explain in more detail, whereas graphics are what you would look at.
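
One way to picture this split between voice and screen is a response that carries two separate payloads. The sketch below is an assumption for illustration only: the `Product` and `Response` types, the field names, and the spoken wording are invented here and do not come from any Alexa API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Product:
    name: str
    price_usd: float
    delivery: str
    image_url: str


@dataclass
class Response:
    speech: str                   # what the voice says, with the details
    display_image: Optional[str]  # what the screen shows; None on a headless device


def build_response(product: Product, has_screen: bool) -> Response:
    """The voice explains in detail; the screen, if there is one, shows only the picture."""
    speech = (
        f"This is the top result for {product.name}. "
        f"It costs ${product.price_usd:.0f}. You can get it {product.delivery}."
    )
    image = product.image_url if has_screen else None
    return Response(speech=speech, display_image=image)
```

The spoken text is the same on both kinds of device in this sketch. Only the visual payload changes, which reflects the point that the graphics do not have to repeat what the voice says.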

How do you prototype with conversational UI?

The most basic way is to do a graphic prototype, such as an app or website. It is exactly the same process as UX design, but you add voice on top of the animation. When testing it with people, you can ask them, “How would you speak with the device?”, along with having them touch and play with it.

You can get insights through how people react or talk about something. An example is asking about the weather. They could ask, “What is the weather?” versus “What is the temperature?” Once they communicate with the AI, you can play back the animation you have prototyped, and you can look at their reactions and interactions with the prototype.

There are a lot of things to keep in mind. For example, what should the user say? Do they need to raise their voice? What if they only use gestures, or just voice and no gestures? Is the information too much, too little, too short?

There is also response speed. With voice, there are so many things to consider. If you are dealing with tech, you need to account for things like latency. How long does it take for the AI to respond before there is something wrong with it?
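
A prototype can make the latency question concrete by giving the AI a time budget and falling back to a spoken apology when the budget runs out. This is a minimal sketch under assumptions: the function name, the fallback wording, and whatever budget you pass in are placeholders, since the interview does not give a specific threshold.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError


def answer_with_budget(lookup, budget_seconds: float) -> str:
    """Run `lookup` in a worker thread and give up once the latency budget is spent."""
    executor = ThreadPoolExecutor(max_workers=1)
    future = executor.submit(lookup)
    try:
        # Wait for the answer, but no longer than the budget allows.
        return future.result(timeout=budget_seconds)
    except TimeoutError:
        # Hypothetical fallback line; a real product would design this carefully.
        return "Sorry, I'm having trouble with that right now."
    finally:
        # Do not block the caller while a slow lookup finishes in the background.
        executor.shutdown(wait=False)
```

Trying different budgets with test participants is one way to learn how long a silence feels acceptable before people assume something is wrong.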

What places do you see conversational UI being used, and what are the implications behind those choices?

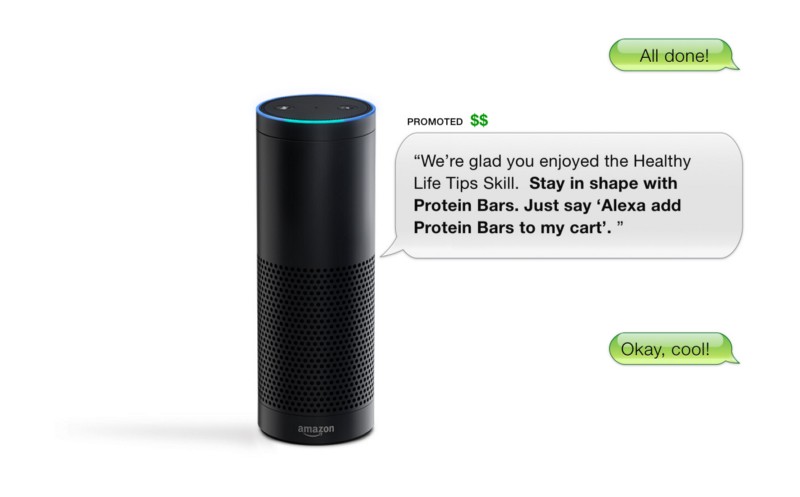

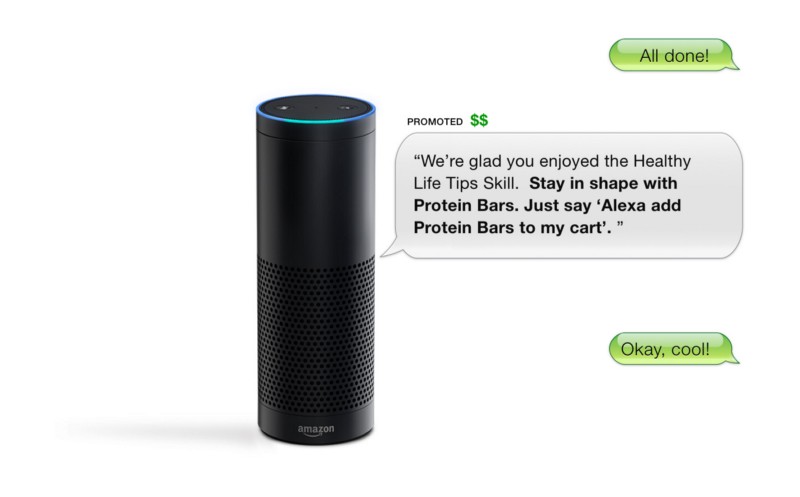

Right now, conversational UI is very new. People are still afraid to talk to devices. I talked to someone today about using her Echo for the first time. She was explaining her first reactions to it. She thought it was strange talking to something that wasn’t there.

A recent article about Alexa and the HomePod said it is going to be awkward talking to the HomePod because you have to say “Siri”. It feels like a robot, and you need a golden utterance to talk to the device. Alexa is more conversational, as if you are talking to a person.

The goal is to make people forget that the device is a robot and feel as if they are talking to a human. This is because people are scared of tech. For example, when the computer came out, people were like, “What, you don’t have to go to the library anymore?” We didn’t have these things for centuries, kind of like when Uber came out. You are basically calling a person from the internet and they show up.

Change in tech freaks people out.

As designers, if we can make that transition a lot easier, then people will have an easier time responding to AI and adopting new technology. In terms of usage, it is for convenience, like being able to ask a device about a schedule or cooking, how to set a table, or how to convert measurements (from cups to milliliters).

Where do you see the future of conversational UI going and what are your thoughts behind this?

Conversational UI will be as if a human is following you.

Quite honestly, I think it will start to become part of everyday life. When you asked where I see conversational UI now, I said it was placed in areas of convenience and in hubs where people put the AI, such as in the kitchen. Conversational UI will be wherever you are. The moment you wake up, the moment you get into the car. It will be the same moment. It will start to be seamless when we move from location to location.
